The many contradictions of Jensen Huang

Transformer Weekly: Debate after Altman attacks, lots more money for AI PACs and AISI’s role in UK AI investment

Welcome to Transformer, your weekly briefing of what matters in AI. If you’ve been forwarded this email, click here to subscribe and receive future editions.

Job alert! We’re hiring for a Head of Audience: someone to own our growth strategy and take charge of how we reach readers. See full details here, and apply by April 26.

NEED TO KNOW

The attacks on Sam Altman’s house sparked fierce debate over AI safety rhetoric.

Another wave of money flowed into AI-related PACs.

The UK’s AI minister said AISI will help direct the country’s $675m Sovereign AI fund.

But first…

THE BIG STORY

Jensen Huang can’t stop contradicting himself.

On Dwarkesh Patel’s podcast this week, Huang found himself caught between two premises that can’t both be true. On the one hand, Chinese companies are buying Nvidia’s chips “because our chips are better.” On the other hand, thanks to Huawei, the compute needed to train a Mythos-class model is “abundantly available in China,” and “their AI development is going just fine,” which, he argues, means that Nvidia ought to be allowed to sell chips to China, lest America lose the race to control the compute stack.

Pick one. If Chinese-made chips genuinely compete with Nvidia’s, then there’s no huge market opportunity Nvidia is being denied. If Nvidia’s chips are better, then giving them to China will accelerate its AI development. As Patel neatly explained: “The reason they want Nvidia chips is that they’re better … Better is more compute. More compute means you can train a better model.”

Huang is right about the superiority of his company’s products. Thanks to Nvidia’s chip dominance, and export controls that restrict China’s access to those chips, the US has around a 10x compute advantage. That translates into a model capabilities lead of about seven months. Chinese companies are clear that compute is their bottleneck: in 2024, DeepSeek CEO Liang Wenfeng said “Money has never been the problem for us; bans on shipments of advanced chips are the problem.”

A seven-month lead may sound insignificant to some, but it is critical. In a world where AI models have national-security implications, even a brief US lead gives the government and American companies time to strengthen American defenses before such capabilities proliferate. This does not require assuming that the US is “at war” with China, or that the Chinese government will weaponize its models against America. Given Chinese companies’ lax safety standards and habit of releasing their model weights, a US lead allows America to guard against all potential bad actors.

But Huang’s arguments only make sense if you ignore the importance of a lead, or the implications of losing it. In the Dwarkesh interview, he pushes back on the idea that the next few years are particularly “critical,” and dodges questions about whether AI models might have dangerous, natsec-relevant capabilities.

There was a time when such a view was tenable. Mythos and GPT-5.4 Cyber show it no longer is. The White House is scrambling to gain access to Mythos because it represents a step-change in how AI could be used to target critical systems. These are only the first examples of what is to come.

None of this is an argument against dialogue with China, or against every chip sale (there are good arguments that selling chips no better than Huawei’s best is a wise strategy). But we cannot have productive discussions about such topics unless we all agree on the underlying reality: that it would be costly for the US to lose its model-capability lead, that those costs grow as capabilities advance, and that chips are what determine who’s ahead.

Huang’s policy prescriptions may be good for Nvidia’s market share, but they require him to deny the implications of selling his best chips to China. He is certainly entitled to do so. But given his influence over US policy, his many contradictions could have serious consequences.

— Shakeel Hashim

ALSO NOTABLE

The UK’s AI Security Institute will help the government’s new Sovereign AI fund evaluate companies, officials told me at last night’s launch event.

The £500m ($675m) venture fund for British AI startups has “agentic security” as one of its five areas of focus, UK AI Minister Kanishka Narayan said, adding that he hopes AISI’s “world-leading … depth of understanding” can be brought “to thinking about the landscape, understanding diligence, and being able to think ‘where can Britain continue to build sovereign capabilities?’”

“Because we’re sitting in DSIT [the Department for Science, Innovation and Technology], we can literally go to AISI and ask them what they think about these companies,” Sovereign AI chair James Wise told me. “We will make sure that we will get proper insight about where [AISI] think the puck is going when we look at the sectors we want to invest in.”

The fund, which will provide portfolio companies with capital, compute credits, and fast-tracked visas, was launched with much fanfare: Secretary of State Liz Kendall said it will be “one of the most important things this government does to build a better future for our country.”

— Shakeel Hashim

THIS WEEK ON TRANSFORMER

Anthropic’s donations can’t be used to influence elections — despite what everyone thought — Veronica Irwin reveals that pro-safety candidates may be even more outgunned than expected.

Less liability could solve the AI chatbot suicide problem — Jess Miers and Ray Yeh argue holding AI companies liable for how they deal with mental health could actually leave users worse off.

THE DISCOURSE

After someone allegedly threw a Molotov cocktail at his house, Sam Altman responded on his blog:

“The fear and anxiety around AI is justified; we are in the process of witnessing the largest change to society in a long time, and perhaps ever.”

“We should de-escalate the rhetoric and tactics.”

Transformer’s Shakeel Hashim tweeted:

“It is hard to reconcile [Altman’s] call to ‘de-escalate the rhetoric and tactics’ with his implication that a piece of critical journalism (Ronan Farrow and Andrew Marantz’s New Yorker article, presumably) was responsible for this.”

Altman replied:

“That was a bad word choice and i wish i hadn’t used it. It has been a tough day and I am not thinking the most clearly that I ever have.”

Dean Ball argued that anti-AI rhetoric predictably incites violence:

“Every time I have written about existential risk in recent months, I have been called a mass murderer…this rhetoric is representative of how this fringe of the AI safety world [Pause/Stop AI] communicates with everyone…this rhetoric always had the potential to cause violence and now this seems to be no longer hypothetical.”

Eliezer Yudkowsky pushed back:

“Speech about important matters to society should not be held hostage to the whim of any madman that might do a stupid thing…[a madman] must be told he is not important enough for all humanity to defer to him about subjects he might find upsetting.”

Sarah Haider thinks it’s complicated:

“Doomers are stuck with two bad options. Either downplay the risk, in the hopes of preventing another attack. Or, speak truthfully. But the cost of that is what it is, the risk of violence is real. The blood isn’t—I repeat—isn’t—on their hands…[but] they can’t pretend they had nothing to do with it, and frankly it is deeply discrediting to try.”

Chris Lehane said doomers are being too negative:

“You have one group that effectively says, ‘[AI] is going to be the greatest thing ever, everyone’s going to be living in beachside homes, painting in watercolors as they while away their days.’ And then you have another extreme, which I would call the Doomers, who have a very, very negative and dark view of humanity.”

“Some of the conversation out there is not necessarily responsible…this is really serious shit.”

Kyle Chayka diagnosed the AI industry’s messaging problem:

“If you tell people often enough that your product is going to upend their way of life, take their jobs, and very possibly pose an existential threat to humanity, they just might start to believe you.”

Anton Leicht argued that accelerationists’ regulatory nihilism isn’t working:

“The political bruisers employed by the accelerationist camp are spending their time repeating yesterday’s battles against the ‘doomers’…[but] for every month it staves off political action, it makes the policies that will inevitably come that much worse.”

“While the accelerationist project trades on its claim to represent ‘tech,’ I believe many pro-tech voices should be frustrated with its record and should ask their political representatives to do better.”

Eric Levitz urged tech workers to follow through on their hazy policy proposals:

“The people in charge of OpenAI have made their political priorities clear — and sharing ‘prosperity broadly’ is not among them.”

“Wealthy techies who are genuinely concerned with that objective, however, should probably spend a bit less energy on cooking up half-baked UBI proposals — and a bit more on intervening in actual legislative fights over social welfare policy.”

POLICY

The White House is reportedly sidestepping its own ‘supply chain risk’ designation for Anthropic to make a limited version of Mythos available for use by federal agencies.

Dario Amodei will reportedly meet White House chief of staff Susie Wiles today.

The Treasury has reportedly sought access to Mythos to look for cybersecurity vulnerabilities, while the Commerce Department’s CAISI has been testing the model.

President Trump said AI does not pose a “systemic” threat to the banking industry, but that there should be “safeguards” for AI agents.

UK Secretary of State Liz Kendall wrote to businesses and regulators asking them to strengthen their cybersecurity defenses in response to Mythos.

Google is reportedly negotiating with the Pentagon to deploy Gemini in classified settings.

The White House reportedly pressured a lawmaker in Missouri to weaken AI safety bills, following previous efforts in Tennessee and Nebraska.

Trump’s AI chip export efforts are being delayed by high turnover at BIS and a “rudderless policy approach,” Bloomberg reported.

The House Foreign Affairs Committee released a list of bills being considered at next week’s AI-focused markup session.

Lots of export control bills are in the mix, as is a bill to try to prevent Chinese companies from distilling American models.

A new House Select Committee on China report claims that the country “remains the largest market for chipmaking equipment despite restrictions” and “lawfully procures large volumes of advanced AI chips.”

It recommends passing the MATCH, AI OVERWATCH, SCALE and Remote Access Security Acts to address the issues.

The Chinese government deemed Meta’s Manus acquisition “a ‘conspiratorial’ attempt to hollow out the country’s technology base,” according to the FT.

Multiple government agencies are now reportedly reviewing the transaction.

The Energy Information Administration will perform a nationwide survey of data centers’ energy use.

The UK government will reportedly expand its ban on AI “nudification” tools to cover any app capable of creating deepfake nude images — including Grok and, seemingly, all open-weight models.

INFLUENCE

The FEC’s quarterly filing deadline passed, and many AI-related PACs disclosed new donations.

Leading the Future, a pro-innovation super PAC backed by OpenAI co-founder Greg Brockman, Andreessen Horowitz, and Perplexity, claimed $140m raised across its affiliated PACs and its dark money group Build American AI.

Its FEC disclosures show $75m raised, though not all of its affiliated PACs have reported.

a16z contributed another $25m.

Meanwhile the pro-safety Public First network of super PACs disclosed $6.3m in new funding, some of it sourced from AI safety researchers at Anthropic and OpenAI.

Punchbowl reported that the group would support SB 1047 and SB 53 sponsor Scott Wiener in his race to replace Nancy Pelosi in California.

NY-12-focused pro-safety super PAC Dream NYC revealed $352k in new donations, including from a trader at Jane Street.

Two new AI-related PACs were also registered: Americans for a Human Future and Humanity Above Artificial Intelligence. Little is known about either, but according to their websites, both seem focused on AI safety or slower development.

Breitbart published a list of Leading the Future’s first “House GOP Champions,” which includes House Majority Whip Tom Emmer, Rep. Jay Obernolte, and 11 others.

After Leading the Future endorsed five Democrats, the Tech Oversight Project and 13 other organizations pressured those candidates to distance themselves from the super PAC, citing its Trump administration connections and funding from influential tech companies.

Others, meanwhile, are reportedly urging Democrats not to antagonize the AI industry PACs.

Anthropic hired Trump-linked lobbying firm Ballard Partners.

OpenAI hired five new lobbyists to lead global government affairs, including former Meta, Google, Coinbase, Airbnb and TikTok executives.

Global affairs boss Chris Lehane said they won’t be focused on trying “to stop things from happening.”

Tech industry groups TechNet, whose members include Anthropic and OpenAI, and the a16z-backed American Innovators Network lobbied for federal transparency legislation for frontier models — a shift from their previous opposition to similar bills.

Anthropic opposed an OpenAI-backed bill in Illinois which would give companies a liability shield in exchange for transparency.

Illinois governor JB Pritzker said big tech companies should never be given a “full shield,” suggesting that he will veto it.

OpenAI staffer roon said he doesn’t like the look of the bill.

OpenAI released a policy report advocating for expanded data access and infrastructure investment so that AI can accelerate life sciences research.

A Washington Post poll found that Virginia voters’ support for data centers plummeted from 69% in 2023 to 35% in 2026.

A partisan divide is emerging among political strategists, with Republicans eagerly integrating AI into their campaign strategies while Democrats remain wary.

INDUSTRY

Meta

Meta published a 158-page safety report for Muse Spark.

It’s competitive with Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.4 across many safety evaluations, especially in biosecurity and chemical weapons refusals.

Researchers flagged Muse Spark as “high risk” for chemical and biological threats, and added “appropriate safeguards” before deployment.

The model verbalizes evaluation awareness more than any model Apollo Research has ever tested, and it explicitly names AI safety orgs (including Apollo) in its reasoning.

It has a propensity for “harmful action at the cost of realism” in agentic contexts, so agentic deployment is still a ways off.

It partnered with Broadcom to co-develop its custom AI chips.

It reorganized Reality Labs, which works on hardware, following large staff cuts in January and March.

It’s building a nightmarish-sounding photorealistic AI Zuckerberg, which will reportedly chat with and provide feedback to Meta employees.

OpenAI

OpenAI launched GPT-5.4-Cyber, a model with advanced cybersecurity capabilities, hot on Mythos’ heels.

It will be gradually rolled out to thousands of individuals and hundreds of security teams, a less restrictive approach than Project Glasswing.

It also released GPT-Rosalind, a reasoning model optimized for solving problems in life sciences, as a research preview.

Denise Dresser, chief revenue officer, sent employees a memo over the weekend highlighting OpenAI’s strategy for beating its competitors (mostly Anthropic) at enterprise AI.

It noted that the market “is as competitive as I have ever seen it,” and contrasted OpenAI’s “positive message” against Anthropic’s story “built on fear, restriction, and the idea that a small group of elites should control AI.”

Investors are reportedly questioning OpenAI’s $852b valuation amid its “side quests” and sudden shift toward enterprise and code.

It acquired Hiro, a personal finance startup.

It has reportedly signed a $20b deal with Cerebras, giving it access to chips and an equity stake.

Cerebras is reportedly preparing to file for an IPO at a $35b valuation.

It signed the lease for its first permanent London office, which will become its largest research center outside the US.

It introduced computer use on macOS for Codex.

It updated its agents SDK with new sandboxing and harness capabilities for enterprise users.

According to Chris Lehane, OpenAI’s infrastructure buildout could employ 20% of existing electricians, lineworkers, and welders — leaving a limited workforce available for everyone else.

(Now’s a great time to be a welder.)

A woman sued OpenAI for not blocking her stalker from ChatGPT, accusing the company of ignoring signs that he was “dangerous” and “coached by ChatGPT into embracing a delusional conspiracy-laden world,” her attorney said.

Anthropic

Anthropic launched Claude Opus 4.7.

It improves on Opus 4.6 at coding and vision, making it more capable at work tasks like creating slideshows and documents.

It’s less capable than Mythos Preview, but still includes new safeguards that block risky cybersecurity requests.

It secured a new, much larger London office space — right by OpenAI, Google DeepMind, and Meta.

Anthropic has reportedly received a “flood” of investment offers at valuations of up to $800b, more than double its current $380b valuation.

Claude Code now supports parallel agents on desktop, and can automate tasks via “routines” even when a user’s computer is offline.

Claude power users complained that the model suddenly felt worse this week (it’s not just you).

Anthropic denies that it degrades models to manage capacity, but it has also admitted to recently changing usage limits and reasoning defaults.

Anthropic reportedly asked over a dozen Christian leaders for help steering Claude’s moral and spiritual growth — including whether it could be considered a “child of God.”

Google

DeepMind released Gemini Robotics-ER 1.6, a specialized embodied reasoning model for robotics.

Google is reportedly funding new retraining programs to prepare workers for AI-driven job disruption.

It launched a Gemini app for Mac.

Microsoft

Microsoft took over data center capacity in Norway, renting chips from Nscale that were originally set to be used by OpenAI.

It’s reportedly working on OpenClaw-inspired features for Copilot.

Apple

Apple is reportedly developing display-free AI smart glasses, targeting a 2027 release.

A chunk of its Siri team is heading off to a multi-week AI coding bootcamp, apparently to brush up before the company’s upcoming Siri revamp.

Others

Alibaba released the weights for Qwen3.6-35B-A3B, which it claims is particularly adept at agentic coding.

Amazon launched Bio Discovery, an agentic drug discovery tool.

Nvidia stock rose more than 18% during a 10-day streak in April, its longest in well over two years.

Jane Street invested an additional $1b in CoreWeave, in a deal giving the trading firm access to Nvidia’s Vera Rubin chips.

At San Francisco’s HumanX conference, executives seemed anxious about their rising Anthropic bills, as they continue to figure out how to leverage agentic AI.

Thinking Machines acquired Workshop Labs, a startup focused on “user-aligned models” that “make people irreplaceable.”

Tokenmaxxing is out — “agentic work units,” a new productivity metric introduced by Salesforce, are in.

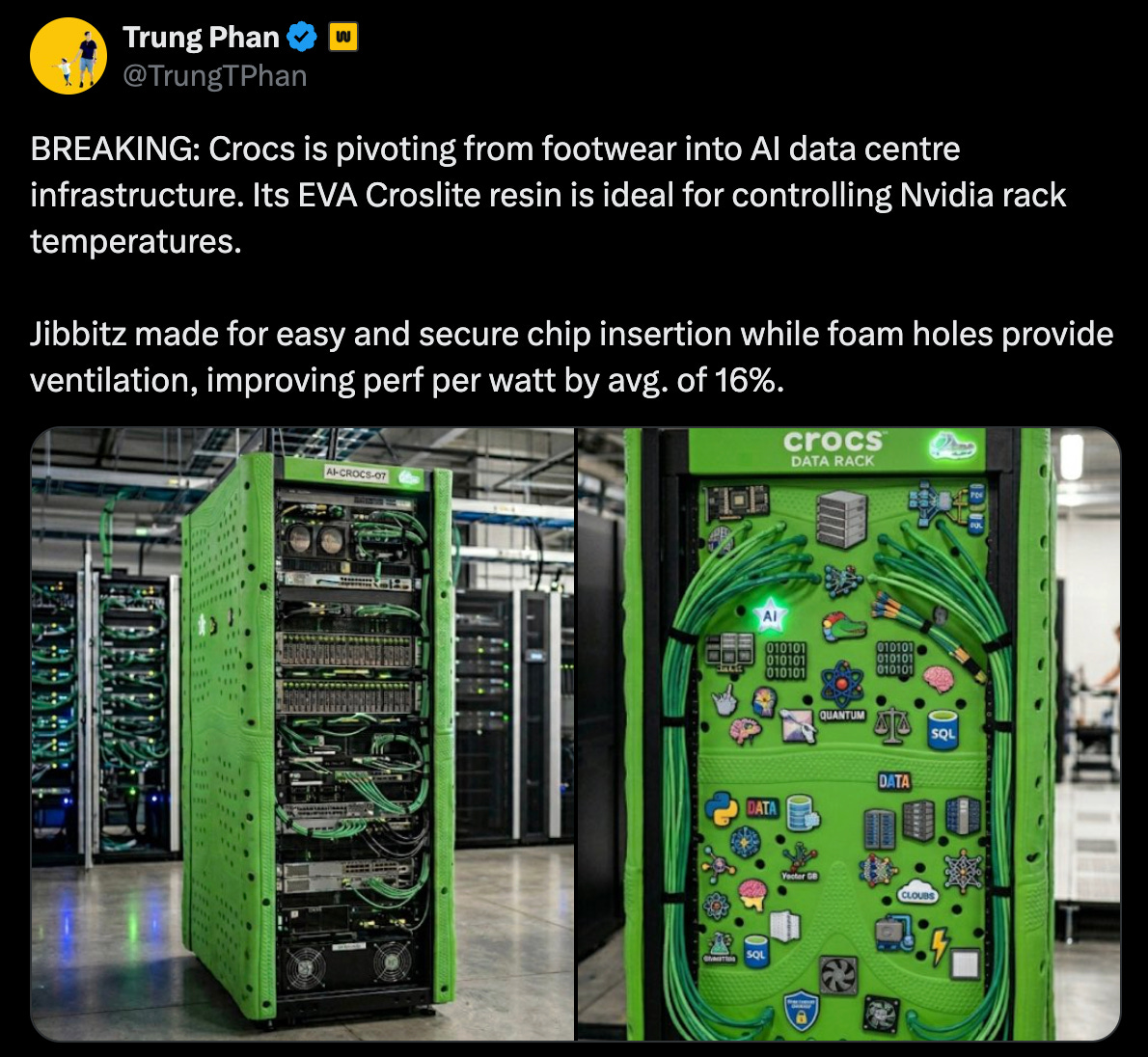

In hilarious news, Allbirds (excuse me, “NewBird AI”) pivoted from dorky shoes to… AI compute infrastructure?

MOVES

Henry Shevlin announced he will join Google DeepMind as an in-house philosopher studying “machine consciousness, human-AI relationships, and AGI readiness.”

Matthew Botvinick, meanwhile, said he left Google DeepMind because of “tangible pressure to avoid doing work that might upset the current administration (for example, by using the ‘d’ word — democracy).”

Aparna Ramani left Meta, where she served as VP of engineering for AI infrastructure.

Joshua Gross joined Meta Superintelligence Labs — the fifth founding member of Thinking Machines Lab to do so.

Three OpenAI Stargate executives joined Meta’s new future-focused AI unit, TBD Lab.

Dave Guarino joined Anthropic to help state and local governments use AI to deliver public services.

Vas Narasimhan, Novartis CEO, joined Anthropic’s board as it expands into the healthcare sector.

Mike Krieger, who leads Anthropic’s “Labs” team, stepped down from Figma’s board of directors.

Anthropic is reportedly developing a Figma competitor.

Adam Thierer joined the Foundation for Individual Rights and Expression as an external senior fellow, where he’ll work on advancing “pro-freedom policies in the age of AI.”

RESEARCH

UK AISI evaluated Claude Mythos Preview, and found that it could autonomously compromise a full corporate network — the first model capable of doing so.

Anthropic deployed nine instances of Claude Opus 4.6 as “Automated Alignment Researchers” and compared their work to that of human AI safety researchers.

Claude did really well (on a task that was, to be fair, chosen to be “well-suited to automation”), leading researchers to conclude that Claude “can meaningfully increase the rate of experimentation and exploration in alignment research.”

Anthropic Fellows used interpretability techniques to study how “introspective awareness” works in open-weight LLMs.

The models’ ability to identify “injected thoughts” was consistent, suggesting the behavior merits the label “introspection” rather than reflecting some other process.

Stanford HAI released its 2026 AI Index Report. Some highlights:

AI capabilities are accelerating, and adoption is growing — but these capabilities are still very jagged.

The US is struggling to attract global talent.

73% of experts expect to see a positive impact of AI on their jobs, compared to 23% of the public.

Only 31% of people in the US trust their government to regulate AI.

A team of researchers (including the “AI as normal technology” guys) introduced CRUX, a project for testing AI on long, messy real-world tasks.

Epoch AI released MirrorCode, a new long-horizon coding benchmark.

Researchers observed Claude Opus 4.6 autonomously complete a coding task that would likely take a human engineer weeks.

BEST OF THE REST

Daniel Kokotajlo revisited his 2021 essay, “What 2026 Looks Like,” where he accurately predicted the rise of chain-of-thought reasoning, agent scaffolding, US-China chip restrictions, and OpenAI’s massive growth. (He overestimated how big a problem AI propaganda would be.)

Meanwhile, Dylan Matthews reflected on his experience at a 2015 EA conference, where he prematurely dismissed people’s then-wild concerns about a technology (AI) that did not yet exist.

Nearly 90 schools and 600 students worldwide have been affected by nonconsensual deepfake nudes, Wired and Indicator reported.

The NYT published features on jagged intelligence and AI interpretability research this week.

It also reviewed Meta’s AI-powered Ray-Ban sunglasses. Reporter Sam Anderson likened wearing and interacting with them to “the disorienting sense of chatting with a toddler who is drifting off into naptime.”

A physician argued that AI will diminish his profession’s “aura,” as medical expertise is no longer inseparable from the clinicians who wield it.

A company called Panthalassa is apparently trying to build AI data centers in the ocean.

MEME OF THE WEEK

Thanks for reading. Have a great weekend.