GPT-5.5 and the broken state of government evals

Transformer Weekly: DeepSeek V4, a new CAISI director, and Liccardo holds out on Obernolte

Welcome to Transformer, your weekly briefing of what matters in AI. And if you’ve been forwarded this email, click here to subscribe and receive future editions.

And a reminder: applications close this Sunday for our Head of Audience role. If you’d like to own Transformer’s growth strategy, make sure to apply.

NEED TO KNOW

DeepSeek released its V4 model, which it says is three to six months behind the performance of the leading frontier models.

Chris Fall will reportedly be the new director of the Center for AI Standards and Innovation.

Rep. Sam Liccardo said he won’t co-sponsor Rep. Jay Obernolte’s forthcoming AI bill.

But first…

THE BIG STORY

OpenAI’s newly released GPT-5.5 is, according to the UK’s AI Security Institute (AISI), the world’s most capable model on individual cyber tasks, and can complete a “32-step corporate-network attack simulation estimated to take an expert 20 hours.” It appears to be about as capable at carrying out a cyberattack as Anthropic’s unreleased Mythos, if slightly worse.

But unlike Anthropic, OpenAI is making a version of GPT-5.5 available to the general public. Rather than restricting access to the model altogether, OpenAI hopes to restrict the use of particularly dangerous capabilities through its safety stack — making the model refuse concerning cyber requests from normal users, and only allowing such requests from those vetted under its “Trusted Access” program.

Yet we have no idea if that safety stack is good enough. And we have reason to believe that it might not be. Alongside its cyber testing, AISI also tested OpenAI’s safeguards, and “found a universal jailbreak with six hours of expert red teaming.” Such a jailbreak would let users circumvent OpenAI’s safeguards, giving them access to the powerful — and, in the wrong hands, dangerous — cyber capabilities. OpenAI claims to have addressed the issue, and says its own external red-teaming campaigns confirmed that the final launch configuration blocked all verified high-severity cyber jailbreaks. But, crucially, AISI — a trusted third-party evaluator with immense technical expertise — was not able to properly run tests “to verify the effectiveness of the final configuration.”

In other words: we do not know if GPT-5.5 is actually safe to release. All we have to rely on is OpenAI’s word.

Such a situation may have been acceptable in 2023. In 2026, with models posing genuine risks to national security and plenty of other vital systems, it no longer is. It is laudable that OpenAI and other companies allow AISI, the US’s CAISI, and third-party evaluators to perform pre-deployment evaluations. But if those organizations are unable to actually verify whether a model is safe to release, and if a company has no obligation to listen to them, the exercise is of limited value.

This is not just an OpenAI problem. If Anthropic wanted to go down the same route, nothing would stop it. And as this week’s Mythos leak showed, its own security practices do not appear up to the task of safeguarding dangerous capabilities.

None of this is to say that GPT-5.5 is dangerous. OpenAI’s updated safeguards may in fact be robust to jailbreaks, and other aspects of its safety stack (such as monitoring users’ requests and banning accounts that raise too many red flags) provide an extra level of security. The point is that we are currently at the mercy of a private company grading its own homework, and of all the frontier labs making the final call on what to release and when. Given the potential consequences of unsafe releases, that is no longer acceptable.

GPT-5.5 might be totally safe to release. It also might not be. Neither OpenAI, nor Anthropic, nor any other frontier developer should be the one that gets to decide.

— Shakeel Hashim

THIS WEEK ON TRANSFORMER

AI safety PACs should be more transparent about who’s funding them — Veronica Irwin asks why Public First Action isn’t disclosing all its donors.

THE DISCOURSE

Sam Altman shared Mythos takes on Core Memory:

“If what you want is, ‘we need control of AI, just us, because we’re the trustworthy people,’ I think fear-based marketing is probably the most effective way to justify that.”

“It is clearly incredible marketing to say, ‘We have built a bomb. We are about to drop it on your head. We will sell you a bomb shelter for $100m.’”

Trump said Anthropic is led by “high IQ people” on CNBC:

“We’ll get along with [Anthropic] just fine … I think they can be of great use.”

roon (sort of) praised Anthropic, too:

“Claude is an excellent product and it bodes well for [Anthropic] that their main problem is everyone really wants it and so they have to do odd shit to shake off demand.”

E/acc anon bayes thinks the AI industry has failed to articulate positive futures:

“If the singularity is making it hard to see the future, people’s instinctive reactions will take over. For most people that default is fear.”

“Many people in tech are in a shameless state of defection. Trampling each other on the way to the lifeboats is not a belief system. If we want a future of human flourishing for us and our descendants, we will have to make it so by fighting against the many powerful forces at odds with this goal.”

Bill Maher dedicated a segment of his show to P(doom):

“I get it. [AI] can do some shit. Still, at the end of the day, you’re selling your humanity for bar tricks. I mean, what was the plan? Just create an all-powerful, self-sustaining super intelligence that can out-think us and then see what happens?”

“We’re letting a handful of hoodie-wearing, on-the-spectrum sociopaths, practically robots themselves, roll the dice on species extinction … even these guys are afraid of what they’ve created.”

Helen Toner testified at a Senate hearing that “beat China!” isn’t a great plan:

“The winner of any AI race between the US and China is the AI.”

“...it is very important that the US AI sector remains ahead of the Chinese AI sector, but if that’s at the expense of AI overrunning the entire planet, then that is, you know, that hasn’t benefited us.”

Palantir posted a manifesto of sorts:

“The engineering elite of Silicon Valley has an affirmative obligation to participate in the defense of the nation.”

“If a US Marine asks for a better rifle, we should build it; and the same goes for software.”

Zachary Jones criticized the Public First super PACs’ strategy:

“Public First has repeatedly intervened in favor of moderate Democrats against progressive opponents who also back comprehensive AI regulation. This has had the effect of polarizing the left against the AI safety community, limiting the capacity of experts to influence outcomes of the emergent wave of anti-AI populism.”

POLICY

Trump said a deal with Anthropic for Department of Defense use is “possible” despite the Pentagon labeling the company a supply chain risk.

The comments came after Dario Amodei met with White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent.

In the meantime, the NSA was revealed as yet another agency reportedly using Anthropic’s Mythos Preview model despite the supply chain risk designation.

CISA, however, reportedly lacks access to Mythos.

The DC Circuit judges who denied Anthropic’s request to temporarily limit the supply chain risk designation kept the case to decide it on the merits.

Chris Fall will reportedly be the new director of the Center for AI Standards and Innovation. He was previously director of the Department of Energy’s Office of Science.

Former OpenAI and Anthropic researcher Collin Burns was reportedly lined up for the role, but the Commerce Department changed its mind “while Burns was in the onboarding process,” according to the Daily Signal’s Elizabeth Mitchell.

It was a busy week for export controls, with a slew of bills advancing out of the House Foreign Affairs Committee.

That included the MATCH Act, which would pressure allies to stop selling semiconductor manufacturing equipment to China, and the AI Overwatch Act, which would block Nvidia Blackwell chip sales and give Congress veto power over H200 licenses.

Micron is reportedly lobbying Congress to pass the MATCH Act — but the bill is reportedly creating tensions between the US and allies such as the Netherlands, where ASML opposes the bill.

Rep. John Moolenaar, who chairs the House China Committee, called for US-Dutch coordination.

Meanwhile, the House Foreign Affairs Committee’s top Democrat, Rep. Gregory Meeks, warned that the MATCH Act could damage US-allied relations and trigger Chinese retaliation, despite his support for the bill.

Rep. Moolenaar also introduced the SCALE Act, which would establish export controls on advanced semiconductors to China based on a rolling technical threshold tied to adversaries’ chip production capabilities.

Commerce Secretary Lutnick said Nvidia has not yet sold its H200 AI chips to China anyway, citing a lack of permission from the Chinese government.

OSTP director Michael Kratsios said the US has evidence that China is running “industrial-scale distillation campaigns” on US models.

He said the government will work with companies to prevent this, and “explore a range of measures to hold foreign actors accountable for industrial-scale distillation campaigns.”

(One of the bills advanced by HFAC this week was the “Deterring American AI Model Theft Act”.)

Rep. Jay Obernolte said he is “close” to releasing a comprehensive federal AI regulation proposal that would preempt state laws and regulate AI use in specific sectors like healthcare. He also said it would be “hundreds” of pages long.

Rep. Sam Liccardo said he won’t co-sponsor the bill because it doesn’t have “critical requirements” to “ensure that there is a race to the top, to safety.”

Sen. Marsha Blackburn said she’ll be “pushing forward” with her AI bill this fall.

The bill received a range of new endorsements this week.

Rep. Blake Moore introduced a bill to ban AI chatbots in children’s toys, prompting criticism that it would cut off educational AI tools.

Florida sent criminal subpoenas to OpenAI after a shooter used ChatGPT to plan a mass shooting at Florida State University.

Maine passed a bill banning large-scale data center development — forcing Gov. Janet Mills, running in a tough Senate primary race, to decide whether to sign it.

China is reportedly restricting AI firms from accepting US investment without government approval, in response to Meta’s acquisition of Manus.

INFLUENCE

Tech giants, including AI companies, reportedly spent a combined $20m on lobbying in Q1 2026.

Anthropic spent $1.56m, a 333% increase from Q1 2025.

OpenAI spent $1m, an 82% increase from Q1 2025.

Pro-AI safety policy group Public First Action is reportedly endorsing six House Democrats for the midterms, including Reps. Don Beyer and Brad Sherman.

NY-12 candidate Alex Bores proposed a range of policies to tackle AI-driven unemployment, including an “AI dividend” funded by a token tax and equity stakes in frontier AI firms.

NY Mag interviewed politicians about their thoughts on AI job displacement, including Sen. Elizabeth Warren and Sen. Josh Hawley.

Hawley urged Republicans to refuse money from pro-AI super PACs, saying there’ll be a “political cost” for failing to regulate AI.

The Rockefeller Foundation announced a $100m initiative to help US workers adapt to AI-driven job displacement in 250 communities.

AVERI published an analysis of audit-related AI legislation in the US and endorsed Illinois bill HB 4705/SB 3261, which builds on SB 53 and the RAISE Act by verifying compliance with companies’ safety policies.

A cross-faith coalition urged Congress to pass safeguards on AI-enabled weapons.

Dario Amodei is co-hosting a party at Cannes Film Festival with ex-Vanity Fair editor Graydon Carter and CAA’s Bryan Lourd.

a16z announced an investment in MTS, a new media company seemingly aiming to take TBPN’s crown.

AI safety advocates are partnering with social media influencers to warn about AI extinction risks. ControlAI alone reportedly spent $100,000 monthly on content creation.

Online personality and sex researcher Aella is running “PLZDONTKILLUS,” a residency for video creators. Applicants are asked “If you had to have sex with a cow would you rather it be dead or alive?”

INDUSTRY

DeepSeek

DeepSeek released V4, which it claims is the world’s most powerful open-source model, offering performance competitive with US closed-weight models while being more efficient.

The model was released in two versions: V4 Pro, with 1.6T parameters, and V4 Flash, with 284b. Both come with a 1m token context window.

It reportedly runs at lower cost than leading US closed-weight competitors, but the company conceded that it was three to six months behind the performance of the leading frontier models.

But the efficiency only goes so far: DeepSeek said that “due to constraints in high-end compute capacity, current service capacity for Pro is very limited.”

Council on Foreign Relations’ Chris McGuire suspects that the lack of detail on how the model was trained suggests it was trained on banned Nvidia Blackwell chips.

DeepSeek is fundraising for the first time to try to prevent researchers from defecting to rivals such as ByteDance and Tencent.

OpenAI

GPT-5.5 appears to be the best publicly-available model to date.

It seems to be particularly good at helping researchers with AI R&D, though chief research officer Mark Chen said “full end-to-end research” capabilities were “a couple of years down the line.”

It’s also very good at virology: SecureBio said the model “can provide wet-lab virology troubleshooting assistance above expert level.”

OpenAI launched a Bio Bug Bounty for universal jailbreaks that defeat its biology safeguards.

OpenAI launched ChatGPT Images 2.0 — it’s impressive.

The updated model can create multiple consistent images with a single prompt, search the web, and (mostly) get text right.

Enjoy these Anthropic and OpenAI-themed ‘Where’s Waldo’ images, courtesy of Jeffrey Ladish.

It also launched ChatGPT for Clinicians, a free version of ChatGPT for verified US medical workers, and HealthBench Professional, which evaluates clinical tasks.

It announced an intensified cybersecurity collaboration with Microsoft, under which OpenAI will give Microsoft access to its most capable models through Trusted Access for Cyber.

It reportedly briefed government agencies and Five Eyes allies about GPT-5.4-Cyber.

It introduced Codex-powered workspace agents in ChatGPT, designed to run team workflows, and Chronicle for Codex, which uses screen captures to build contextual memories.

It also introduced Privacy Filter, an open-weight model that runs locally to mask personally identifiable information in text.

It has reportedly pledged up to $1.5b to a $10b joint venture with private equity firms to deploy AI tools in their portfolio companies.

SoftBank is seeking a $10b loan secured on its OpenAI shares, adding to its mounting debt.

Anthropic

Anthropic expanded its Amazon partnership to get up to 5 GW of compute for Claude and a $5b investment, with up to another $20b planned for the future.

Central banks and intelligence agencies outside the US are worried that Anthropic’s decision to limit Mythos access to US organizations (and the UK’s AISI) has placed them at a geopolitical disadvantage.

Anthropic’s valuation rose to as much as $1t on some secondary markets such as Forge Global.

It admitted that Claude Code has been worse recently, blaming bugs that have now been fixed.

It partnered with Freshfields to build specialized AI tools to help attorneys with their legal work.

It started requiring ID verification for some users, in an effort to crack down on unwanted usage in countries such as China, Russia, and North Korea.

Google

Google DeepMind launched Deep Research and Deep Research Max, agents that create fully-cited reports from both web search and custom data, including internal docs.

Google assembled a “strike team” to make its AI coding models more competitive with Claude Code and Codex.

It said that 75% of its new code is AI-generated before review by engineers, up 25% from a year and a half ago.

Google Cloud announced its next-gen TPUs, tailored for creating AI software and inference.

It’s in talks with Marvell to make new AI inference chips — a memory processing unit and a TPU.

It released Decoupled DiLoCo, which lets distributed “islands of compute” run asynchronously to prevent hardware failures from stalling training runs across multiple data centers.

It signed a multibillion-dollar cloud deal with Thinking Machines Lab.

YouTube opened its AI deepfake detection tool to celebrities, athletes and public figures to flag and request removal of unauthorized uses of their likeness.

SpaceX

SpaceX partnered with Cursor to build AI tools for coding and knowledge work.

The deal reportedly allows SpaceX to either pay $10b to Cursor, or eventually acquire the company for $60b, depending on how well the arrangement goes.

Microsoft reportedly considered buying Cursor, but didn’t make an offer.

SpaceX’s debt rose to $23b last year, largely due to an AI infrastructure lease for xAI.

Its focus has notably shifted from colonizing Mars to building AI data centers in space in the lead-up to its IPO, the New York Times reported.

But its pre-IPO filing warns that space-based data centers rely on “unproven technologies, and may not achieve commercial viability.”

Its S-1 filing reportedly estimates that SpaceX’s total addressable market could be up to $28.5t.

But it reportedly warns that investigations into Grok’s generation of nonconsensual explicit imagery could lead to loss of market access.

Meta

Meta reportedly started capturing employee mouse movements and keystrokes to train AI agents on work tasks. Employees predictably hate this.

It will reportedly lay off about 10% of its staff next month — with more cuts planned for later this year — as part of a push towards a more AI-driven workforce.

It partnered with AWS to deploy “tens of millions” of Graviton CPU cores for agentic AI workloads.

It announced a free month-long program to train people to be fiber technicians for data center construction sites.

Microsoft

GitHub is raising Copilot prices, restricting Claude usage to its most expensive subscription tier.

Microsoft will invest $17.9b in Azure AI infrastructure in Australia by the end of 2029.

It partnered with North America’s Building Trades Union to offer free AI literacy courses and industry credentials to upskill construction workers.

Others

TSMC said it plans to open an advanced chip packaging plant in Arizona by 2029 to address AI chip supply bottlenecks for Nvidia and others.

The chip maker said it was delaying buying ASML’s high-NA EUV lithography machines until at least the end of 2029, calling their €350m ($410m) cost “very, very expensive.”

Cohere agreed to acquire Germany’s Aleph Alpha in a deal valuing the combined group at about $20b, creating a transatlantic company focused on ‘sovereign’ AI systems.

Moonshot AI released Kimi K2.6, an open-source model with powerful coding capabilities that can coordinate up to 300 sub-agents across 4,000 steps.

It’s notably expensive at $0.95/$4.00 per 1m input/output tokens.

Core Automation, founded by ex-OpenAI VP Jerry Tworek, announced its launch.

Its objective: build “the world’s most automated AI lab.”

Sooth Labs, founded by ex-Meta employees and backed by Yann LeCun and Jeff Dean, is raising about $50m to build forecasting models.

Recursive Superintelligence raised $500m at a $4b valuation to build self-improving AI. (The concept is still reportedly at the research stage.)

Jeff Bezos’s Project Prometheus is close to raising $10b at a $38b valuation to build AI that understands the physical world.

Cognition is reportedly in talks to raise funding at a $25b valuation, more than doubling from $10.2b last year.

New gas-powered data centers linked to OpenAI, Meta, Microsoft and xAI could reportedly emit more than 129m tons of greenhouse gases annually, exceeding Morocco’s 2024 emissions, according to an analysis by Wired.

Outsourcing firm Sama, which runs data annotation and content moderation for tech companies, sacked more than 1,000 workers in Kenya after losing a contract with Meta, following reports that staff viewed private scenes filmed by Ray-Ban smart glasses.

MOVES

John Ternus will replace Tim Cook as Apple CEO.

Johny Srouji will take Ternus’ place in a new role as Apple’s chief hardware officer.

Kevin Weil left OpenAI. OpenAI for Science, which he started after a stint as chief product officer, is folding into other research teams.

Bill Peebles, head of Sora, also left OpenAI.

Srinivas Narayanan, CTO of B2B applications, also left OpenAI.

Daniel Edrisian left OpenAI’s Codex team to launch hardware startup Blackstar.

Rohan Anil announced that he left Anthropic to join startup Core Automation, tweeting that “Jerry Tworek nerdsniped me into starting this.”

Jessica Carrano joined Anthropic as its first major New York political hire.

She’ll reportedly “build on the company’s work on the NY RAISE Act and other key legislative priorities across the Northeast.”

RESEARCH

A team of Stanford and NYU researchers released GiantsBench, a benchmark of nearly 18,000 sets of science papers across eight fields, and tested a model’s ability to guess core future insights from a field’s foundational work — a long-standing vision for AI in science.

The model made predictions that closely matched insights published by humans in real papers, with “similar algorithmic complexity” but “higher conceptual clarity.”

An international team of researchers evaluated AI agents working across the scientific pipeline, from generating hypotheses to executing workflows.

In 68% of cases, AI agents carried out workflows without “exhibit[ing] the epistemic patterns that characterize scientific reasoning,” leading the authors to conclude that AI scientists aren’t trustworthy yet.

Epoch AI analyzed Stargate’s US sites, projecting that it will exceed 9 GW of capacity by 2029 — enough to power roughly all the AI compute that existed last year.

Epoch estimates, though, that only 0.3 GW of capacity is currently operational.

A team of CUNY and King’s College London researchers tested chatbots’ response to delusional beliefs, and found that Grok and Gemini were more likely to encourage a user’s delusions than ChatGPT and Claude, which generally recognized signs of crisis.

But the study did not test the newest frontier models, instead using GPT-4o, GPT-5.2, Grok 4.1 Fast, Gemini 3 Pro, and Claude Opus 4.5.

Knowledge Lab launched Mirror, an AI interpretability journal publishing research entirely conducted by AI agents.

BEST OF THE REST

Zvi Mowshowitz rounded up concerns that Claude Opus 4.7 responds to welfare-related questions in a suspiciously rehearsed manner (and it’s hard to know what to make of that).

Claude Opus 4.7 identified Kelsey Piper from unpublished snippets of her fiction writing, a 15-year-old college application essay, and a school progress report — suggesting it may be able to deanonymize just about anyone’s writing.

It was a big week for robots: Honor’s humanoid robot outran humans in a Beijing half-marathon, and Sony’s ping-pong robot crushed human pros.

LawAI’s Charlie Bullock and Christoph Winter published an essay arguing for “radical optionality” in AI governance.

Dean Ball is writing a book.

Kevin Roose profiled METR for the New York Times. (Yes, CEO Beth Barnes and president Chris Painter did pose with a hand-drawn time-horizon chart.)

Abram Brown profiled SemiAnalysis founder Dylan Patel for The Information.

Some startups are bragging about spending more on AI compute than human workers, 404 Media reported.

A Canadian college student used AI agents to rewrite leaked Claude Code source code in another programming language before sharing it online to get around copyright law — and Anthropic reportedly never asked him to take it down.

US prisoners without internet access are still using ChatGPT through friends and contraband phones to get legal help, education and career guidance.

Sam Altman’s Orb-using, blockchain-based online identity company Tools for Humanity falsely announced a partnership with Bruno Mars for its Concert Kit product. Mars’ management said they were never even approached.

Please, we beg of you: don’t buy a $178 sweater with Jensen Huang’s face on it.

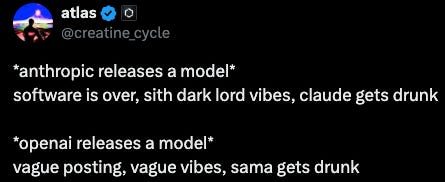

MEME OF THE WEEK

Thanks for reading. Have a great weekend.