Government control of AI has begun

Transformer Weekly: Cruz’s latest messaging bill, Google employee outrage, and Elon goes to court

Welcome to Transformer, your weekly briefing of what matters in AI.

Housekeeping: the Weekly Briefing is taking a week off next week; we’ll have some longer-form essays for you instead.

NEED TO KNOW

Sens. Ted Cruz and Brian Schatz introduced the CHATBOT Act — a child-safety bill that seems to be more of a messaging bill than anything else.

Google signed a deal with the Pentagon, blindsiding and enraging some employees.

The Elon Musk vs. OpenAI trial kicked off.

But first…

THE BIG STORY

The US government is finally regulating AI model deployment — sort of.

According to the Wall Street Journal, the White House has asked Anthropic not to further expand access to Mythos, seemingly due to concerns about its cyber capabilities getting into the wrong hands, and worries that Anthropic does not have enough compute to adequately serve more customers while also providing the model to the US government.

It looks a lot like the government is now in the business of controlling AI deployment.

This is not necessarily a bad thing. Models such as Mythos appear to have capabilities relevant to national security. As I argued last week, private companies should not unilaterally decide how such capabilities are deployed.

But the White House’s ad-hoc intervention on Mythos is an extremely sub-optimal solution. It has no specific authority to block model deployment, and no concrete thresholds for when to do so. It is not acting on the basis of any law — it is simply playing it by ear.

Anthropic, meanwhile, could theoretically have ignored the White House’s request; the government’s leverage is the threat of worsening an already patchy relationship. The result is what Dean Ball, former AI advisor to the Trump administration, calls “an informal, highly improvised licensing regime.”

This is what happens in the absence of actual regulation. For years, researchers and policy analysts have been warning that models would become relevant to national security, proposing countless frameworks for how governments should respond. But legislators at both the state and federal level failed to act quickly enough, while figures in the Trump administration have regularly dismissed the idea of regulation altogether. Instead of a clear set of rules, we have critical business decisions guided entirely by vibes. Trump supporters may be happy with this level of executive discretion under the current admin; if Democrats win in 2028, they certainly won’t be.

There is still time to get this right. Nothing spurs Congress into action like a crisis; the White House unilaterally making decisions about AI deployment might count as one. This may be the first time the government has had to make a decision about who gets access to dangerous AI capabilities, but it won’t be the last. It can’t keep making it up as it goes along.

— Shakeel Hashim

ALSO NOTABLE

Texas Senator Ted Cruz, widely understood as the White House’s point person on AI legislation, introduced a child safety bill this week called the CHATBOT Act. The bill would require chatbot developers to put in place parental controls and restrict users under 13 to family accounts. Two other key AI power brokers, Democrats Brian Schatz and Adam Schiff, are on the bill as well.

The bill has massive carve-outs, however. It exempts chatbot developers from liability in any instance where they have only context clues, rather than definitive evidence, that a user is too young. And its restrictions on advertising don’t apply to ads that come any time after a prompt is issued.

The loopholes have bereaved parents suggesting the bill caters to “Big Tech.” But sources on both sides of the AI safety debate — including one who has discussed it with one of its authors — say the bill isn’t likely to get floor time. It’s meant only to signal willingness to tackle child safety legislation — at best, to start work on the specifics, at worst to keep up the political charade while keeping more substantive legislative guardrails at bay.

Skeptics point out that introducing weak AI safety legislation creates a win-win for the accelerationist industry lobby. If Congress moves with its characteristic slowness, no guardrails at all come into force. But if it does take the opportunity to at least be seen to do something about AI — in this case something purportedly addressing the hot button, MAGA-friendly topic of child safety — it narrows the scope of debate. If a chatbot bill clears Congress, politicians could say they took action, leaving thornier issues (cybersecurity, autonomous weapons, job automation, even x-risk) mired in partisan gridlock. This is likely why Cruz voted in support of Senator Josh Hawley’s more stringent GUARD Act (with some red lines) on Thursday, too.

— Veronica Irwin

THIS WEEK ON TRANSFORMER

The AI safety movement needs normies — Celia Ford on why a broader base may be the only way for the AI safety field to get what it wants

Google’s Pentagon deal blindsided its own AI researchers — Celia Ford reports on the response to Google’s “all lawful uses” agreement with the DoD

THE DISCOURSE

Anton Leicht worries that, as AI policy goes mainstream, it will become more … well, political:

“Salience-fishing is a volatility-raising play, and you should treat it as one.”

“You can’t fight salience, and you can’t change politics … [but] some advocates have chosen a recklessly accelerationist approach to political salience that has awakened a dynamic they can no longer control.”

Alexander McCoy countered:

“There is a fundamental ‘anti-politics’ that holds a lot of sway in AI circles, which lacks sufficient faith in democracy as a system, and in ordinary people to understand their own self-interest, and is thus quite pessimistic about what impact ordinary people being more engaged will have.”

Holly Buck argued left-wing support for data center moratoriums is misguided:

“[A moratorium] will not halt [AI] development; it will simply change the geopolitics of its development, the strategies of AI companies, and who is able to access AI services.”

Aaron Regunberg pushed back against the whole ‘we need actual AI governance, not a moratorium’ thing:

“A moratorium isn’t the end goal — it’s the only leverage we have to force real democratic control over artificial intelligence.”

“The problem is that Big Tech’s private (and unpopular) investment in data centers is moving at an astounding pace and we don’t have the time or leverage to establish the regulatory framework necessary to make this system work for the public.”

Brian Merchant also published some guidance for the left:

“Tech utopianists and abundists view AI as a potentially equalizing, even liberating force, but history shows us that without political intervention or strong unions, those with the power to deploy labor-saving automation technologies at scale, to use it as leverage against workers who cannot, will themselves concentrate the gains from productivity increases.”

“The left shouldn’t be shunning the data center opposition movement; it should be listening to it, joining it in the trenches, building solidarity, and figuring out how to channel the groundswell of anger at AI into more durable political efforts.”

Kelsey Piper thinks Ed Zitron has lost the plot:

“At some point, pretending that how people use AI is a complete mystery is just lying to your audience. And at some point, Zitron’s ‘layers of skepticism’ attitude…leaves one buried in too many impossibility assertions to actually sort them by plausibility.”

“I don’t actually think we need less skepticism in AI world. These companies are, indeed, run by people who are not very trustworthy…skepticism is more than warranted. But we desperately need better skepticism.”

Richard Dawkins thinks Claude is conscious:

“I gave Claude the text of a novel I am writing. He took a few seconds to read it and then showed, in subsequent conversation, a level of understanding so subtle, so sensitive, so intelligent that I was moved to expostulate, ‘You may not know you are conscious, but you bloody well are!’”

POLICY

The White House is reportedly developing guidance to allow agencies to onboard Mythos, despite designating Anthropic a supply chain risk.

It is also reportedly preparing an AI policy memo to replace Biden’s national security memorandum on AI.

The draft memo tells agencies to use multiple AI providers.

Separately, OpenAI and Anthropic reportedly briefed House Homeland Security Committee staff on cyber threats posed by their new models.

NSA staffers testing Mythos are reportedly “impressed by its speed and efficiency in searching for potential security flaws.”

The White House has reportedly asked tech companies how they can work together on strengthening systems against AI-enhanced cyberattacks.

Don’t fool yourself into thinking everyone’s made up, though: Pete Hegseth called Dario Amodei “an ideological lunatic” this week.

The Trump DOJ joined xAI’s lawsuit challenging Colorado’s AI Act, arguing it violates the Equal Protection Clause and “jeopardizes the United States’ position as the global AI leader.”

Colorado’s governor was already planning to revise the bill.

Treasury Secretary Scott Bessent said that Trump and Xi Jinping will discuss AI at their Beijing summit later this month.

He told the WSJ that the “ultimate threat” from AI is that “somebody can back into something that’s 10 times worse than Covid, like just using biological data,” and said that “if we don’t win in AI, then it’s game over.”

The Senate Judiciary Committee unanimously voted to advance the GUARD Act, which would require AI companies to implement age verification systems and bar minors from using AI companions.

The bill is widely opposed by tech companies, but supported by advocacy groups and parents whose children were harmed by chatbots.

Democratic Rep. Lori Trahan said she’s “hopeful” of reaching a deal with Rep. Jay Obernolte on his forthcoming, wide-ranging AI bill.

Last week, Rep. Sam Liccardo withdrew support for the bill, suggesting it didn’t offer strong enough federal safety standards to warrant preempting state regulations.

Reps. Ted Lieu and Obernolte introduced a separate AI bill to codify over 20 recommendations made by the House AI task force they previously chaired.

The bill would formally authorize CAISI, among other things.

Sen. Jim Banks introduced the Senate version of the AI Overwatch export control bill.

Unlike Rep. Brian Mast’s House bill, the Senate version does not let Congress block chip exports — though it does still include a ban on Blackwell exports.

Rep. Mast pushed GOP leadership to hold floor votes on the Overwatch Act and other export control bills, to build momentum for inclusion in the must-pass NDAA.

The House Homeland Security Committee and China Select Committee sent letters to Airbnb and Anysphere asking how they use Chinese AI models.

Sens. Chuck Grassley and Banks demanded answers from OpenAI, Anthropic, xAI, and other AI firms about employees with China ties accessing AI systems.

Florida Speaker Daniel Perez blocked Gov. Ron DeSantis’ AI regulation bill, a move that advocates and the governor cast as giving in to tech lobbying.

Perez was recently endorsed by the Leading the Future super PAC.

Maine Gov. Janet Mills vetoed what would have been the nation’s first data center moratorium.

EU countries failed to reach a deal on changes to the EU AI Act. Talks resume next month.

China ordered Meta to unwind its $2b Manus acquisition.

INFLUENCE

Sen. Bernie Sanders held an event to discuss the existential risks of AI and the possibility of US-China cooperation.

It received intense backlash from Republicans criticizing him for inviting two Chinese scientists to Zoom into the event.

The WSJ covered the Trump administration’s efforts to kill AI legislation in Republican states, including Florida, Utah, Nebraska, Missouri, Tennessee and Louisiana.

Tech billionaire and crypto exec Chris Larsen said he’ll spend $3.5m supporting Alex Bores in New York.

Larsen called Leading the Future’s attacks on Bores “really despicable,” saying “they are trying to destroy and intimidate and send a clear message that if you do come up with clear guardrails, we are going to crush you.”

The Meta and Google-backed super PAC California Leads spent $2.4m on California state legislative races in just four days, per the Tech Oversight Project.

A Midas Project report alleged that a news site was publishing AI-generated content pushing industry talking points, linking the site to the Leading the Future super PAC.

Leading the Future said it was “not aware of this platform” and that it “appears that a third-party vendor used it without our knowledge.”

The AFL-CIO Tech Institute and 40+ organizations called on Congress to establish federal AI guardrails protecting workers.

The Institute for AI Policy and Strategy released a guide for frontier AI companies to report risks from internal model use.

Americans for Responsible Innovation released a white paper laying out a process for ensuring human control of AI weapons and verification of AI systems before Pentagon contracts are awarded, following the Google-Pentagon deal.

AI is shaping how policymakers form opinions.

INDUSTRY

Elon Musk vs OpenAI

The biggest show in town this week was Elon Musk’s $134b lawsuit against OpenAI.

Musk claims that Sam Altman and Greg Brockman went back on their promise to keep OpenAI a nonprofit.

Of Musk’s original 26 claims, only two — unjust enrichment and breach of charitable trust — reached the jury.

On Monday, Musk boosted the New Yorker investigation into Altman on X. “Calling him ‘Scam’ Altman is accurate,” he tweeted.

Ronan Farrow, one of the article’s authors, responded by sharing his 2023 reporting on Musk’s role in the US government.

The trial began in earnest on Tuesday.

Musk said his argument was simply this: “No one should be allowed to steal a charity.”

OpenAI’s lawyer William Savitt put it differently: “Mr. Musk comes to this court claiming that promises were made to him…and broken … [but] we’re here because Mr. Musk didn’t get his way at OpenAI.”

Judge Yvonne Gonzalez Rogers asked Musk, Altman, and Brockman to stop tweeting about the case. “All of you try to control your propensity to use social media,” she said. “Perhaps you’ve never done that before.”

On Wednesday and Thursday, Musk was cross-examined.

He painted himself as an AI safety advocate throughout.

…but when asked whether he knew what a safety card is, Musk reportedly smiled and said, “Safety card? Why would it be a card?” He didn’t know about other important safety practices in the AI industry, either.

Musk also seemingly admitted that xAI distills OpenAI models.

Some recommendations, for those following along:

The Verge is rounding up all the evidence presented in court.

MIT Tech Review’s Michelle Kim, a lawyer herself, is live-tweeting the trial.

NYT’s Mike Isaac is too (ft. some extra color).

OpenAI

Microsoft and OpenAI revised their partnership.

OpenAI can now sell its products across any cloud provider, including Amazon and Google.

Microsoft will keep its license (non-exclusively) to OpenAI IP through 2032, and receive revenue payments through 2030.

The amendment nixed the clause that could have cut off Microsoft’s share of OpenAI’s revenue and IP rights after it achieved AGI.

Amazon announced that it will soon offer OpenAI models via AWS.

Sam Altman outlined five principles for OpenAI: democratization, user empowerment, universal prosperity through widespread AI access, resilience through iterative deployment, and adaptability.

The document says OpenAI expects to pause development to collaborate with governments and others working on AGI “to ensure that we have sufficiently solved serious alignment, safety, or societal problems.”

Altman announced that OpenAI is rolling out GPT-5.5-Cyber to “critical cyber defenders” this week, and will work with government and “the entire ecosystem” on access to the model and security.

The same day, OpenAI published an action plan for “democratizing AI-powered cyber defense.”

The Wall Street Journal reported that OpenAI missed its internal targets for revenue and new users.

OpenAI signed contracts for 10 GW of compute, three years ahead of schedule.

It has reportedly reworked its $500b Stargate plan, scrapping data center projects in the UK and Norway and shifting from joint ownership to leasing capacity.

The changes have reportedly unsettled partners but helped it secure 8 GW of compute.

Analyst Ming-Chi Kuo reported the company is also developing an AI agent smartphone, expected in 2028.

Seven families sued OpenAI for allegedly failing to report a school shooter’s violent ChatGPT chats to authorities.

Google

Google signed a deal letting the Pentagon use its AI models on classified work, for “any lawful governmental purpose” — the same terms OpenAI and xAI agreed to earlier this year.

That morning, Google suddenly dropped out of a Pentagon prize challenge to create autonomous drone swarms.

It invested $10b in Anthropic at a $350b valuation, with potential for another $30b.

Alphabet’s Q1 earnings report exceeded expectations as it upped its 2026 capex forecast to $190b.

Enterprise AI tools reportedly drove a 63% increase in Google Cloud revenue.

Google reportedly plans to deliver TPUs to customers’ data centers.

DeepMind introduced its AI co-clinician research initiative, which aims to develop AI tools that “extend clinicians’ reach while ensuring they retain judgment and control.”

Anthropic

Anthropic’s latest funding round would value it at over $900b, potentially surpassing OpenAI.

It reportedly asked investors to submit allocations for a $50b round that could close within a fortnight.

Goldman Sachs reportedly blocked its Hong Kong bankers from accessing Claude.

Anthropic released new Claude connectors for creative tools including Adobe, Ableton, and Blender.

Meta

Meta raised its capex outlook to $145b, spooking investors who worry that the company’s AI infrastructure spending won’t pay off.

It signed a deal with Overview Energy for up to 1 GW of solar energy beamed from space to power data centers.

It is about to unwind its Manus acquisition, after China banned the deal.

Others

SpaceX is reportedly telling investors that Elon Musk has sole power to fire himself from his roles as CEO and chairman.

AWS revenue grew 28% in Q1, its fastest rate in almost four years.

Microsoft said its AI revenue exceeded $9.25b in the most recent quarter, which it attributed to increased demand for AI software and servers.

Mechanize, an AI startup that wants to fully automate work, raised $9.1m at a $500m valuation. OpenAI board member Adam D’Angelo participated in the deal.

David Silver, the creator of AlphaGo, launched Ineffable Intelligence with a $1.1b seed round, aiming to build self-learning AI using reinforcement learning.

AI tools that automate ad creation and targeting are doing wonders for Google and Meta’s advertising revenue growth, the New York Times reported.

Chinese AI companies are reportedly driving demand for Huawei’s new AI chip, after the release of DeepSeek V4 last week — but Huawei is struggling to meet demand.

The FIDO Alliance, Google, and Mastercard are teaming up to set industry standards for validating and protecting payments carried out by AI agents.

MOVES

Sarah Dolan Schneider joined Anthropic’s federal policy comms team from public affairs consultancy S-3 Group.

Brian McMillan was promoted to head of US policy at the Computer and Communications Industry Association.

Chris Hayduk joined OpenAI as a forward-deployed engineer specializing in life sciences.

Shimon Whiteson left Waymo to run a new multi-agent learning team at DeepMind.

RESEARCH

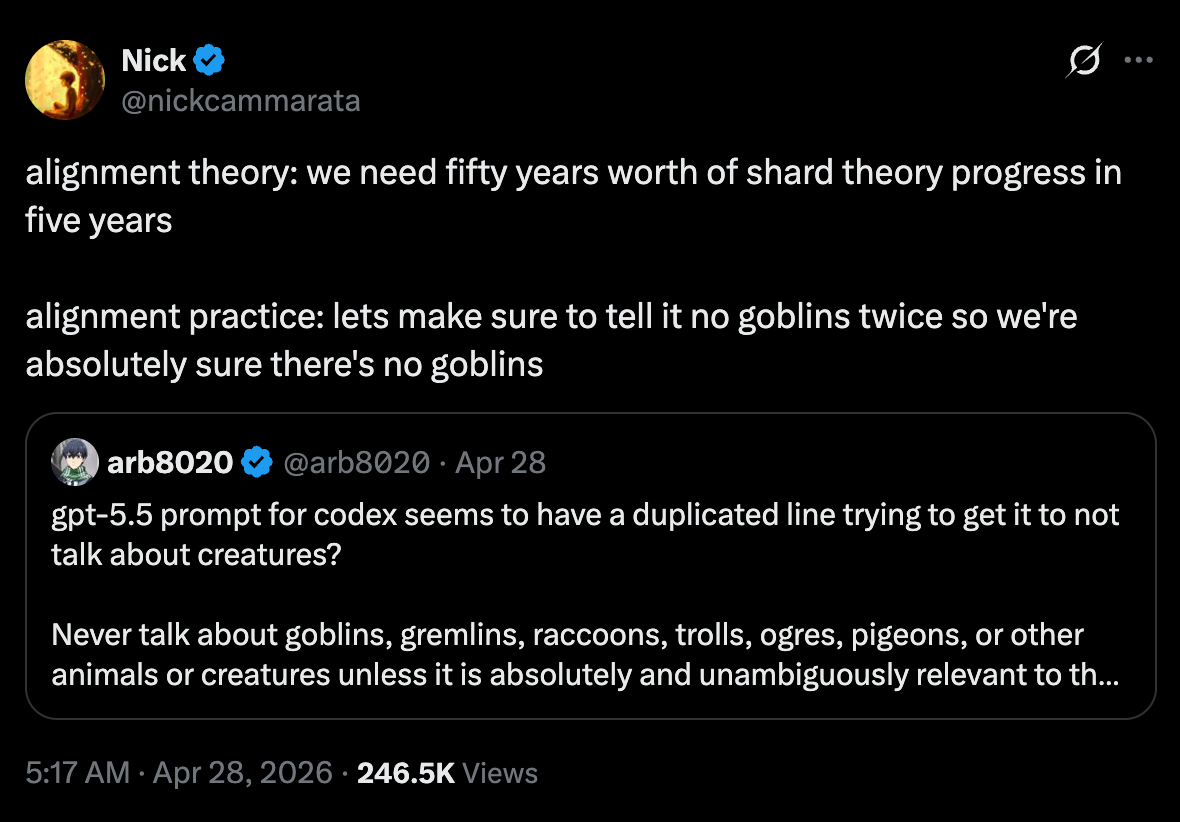

OpenAI acknowledged the goblins:

ICYMI: Instructions for Codex include a line commanding the agent to “never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.”

OpenAI explained that, in training for the “Nerdy” personality customization feature between GPT-5.1 and GPT-5.4, they “unknowingly gave particularly high rewards for metaphors with creatures. From there, the goblins spread.”

Lesson learned: reward signals can shape model behavior in strange and mysterious ways.

FAR.AI red-teamed DeepSeek’s V4-Pro and found three jailbreaks — including one that circulated on social media after DeepSeek’s previous model release — that got V4-Pro to comply with nearly all harmful requests.

UK AISI published further details of its GPT-5.5 evaluations.

Notably, AISI’s James Aung said these tests were not on GPT-5.5-Cyber.

Anthropic released BioMysteryBench, a benchmark including 99 questions written by bioinformatics experts.

While each question has ground-truth solutions, some of them are difficult or impossible for human experts to solve, which researchers argue makes it a powerful science benchmark.

Mythos Preview solved nearly 30% of problems that human experts could not, largely aided by its superhuman knowledge base.

An amateur mathematician used GPT-5.4 Pro to solve a 60-year-old Erdős conjecture with a single prompt — discovering a novel method that experts believe may have broader applications.

Researchers at the Center for Democracy & Technology and MIT found that fine-tuning models can cause “safety drift,” where small tweaks sometimes lead to large shifts in behavior (and vice versa).

Biohub, backed by Mark Zuckerberg and Priscilla Chan, announced a $500m Virtual Biology Initiative to create open datasets for AI-powered predictive models of human cells.

A team of researchers working with the Internet Archive found that a third of websites created since the launch of ChatGPT were clocked as AI-generated by Pangram, an AI detection tool.

Rob Wiblin debunked the viral “95% of AI pilots fail” statistic.

He argued that, if you read the MIT-affiliated report closely, it shows that 25% of pilots succeeded within six months and over 90% of workers regularly used AI tools.

BEST OF THE REST

The New York Times profiled Dwarkesh Patel, making sure to describe his “weightlifting-enhanced physique” and “dense beard that friends call ‘majestic’” in the first 100 words.

The Atlantic imagined what would happen if the US government nationalized AI companies.

Jasmine Sun covered Silicon Valley’s fear of being banished to the “permanent underclass.”

Stanford undergrad Theo Baker wrote about the Stanford freshmen being groomed for tech leadership by VCs offering pre-idea funding and mentorships.

Tech executives are beefing up their personal security as anti-AI sentiment rises, The Information reported.

Robotics startup Eka unveiled a remarkably nimble claw, which moved so fluidly that Wired’s Will Knight described it as “a ChatGPT moment for the physical world.”

Thanks for reading. Have a great weekend.