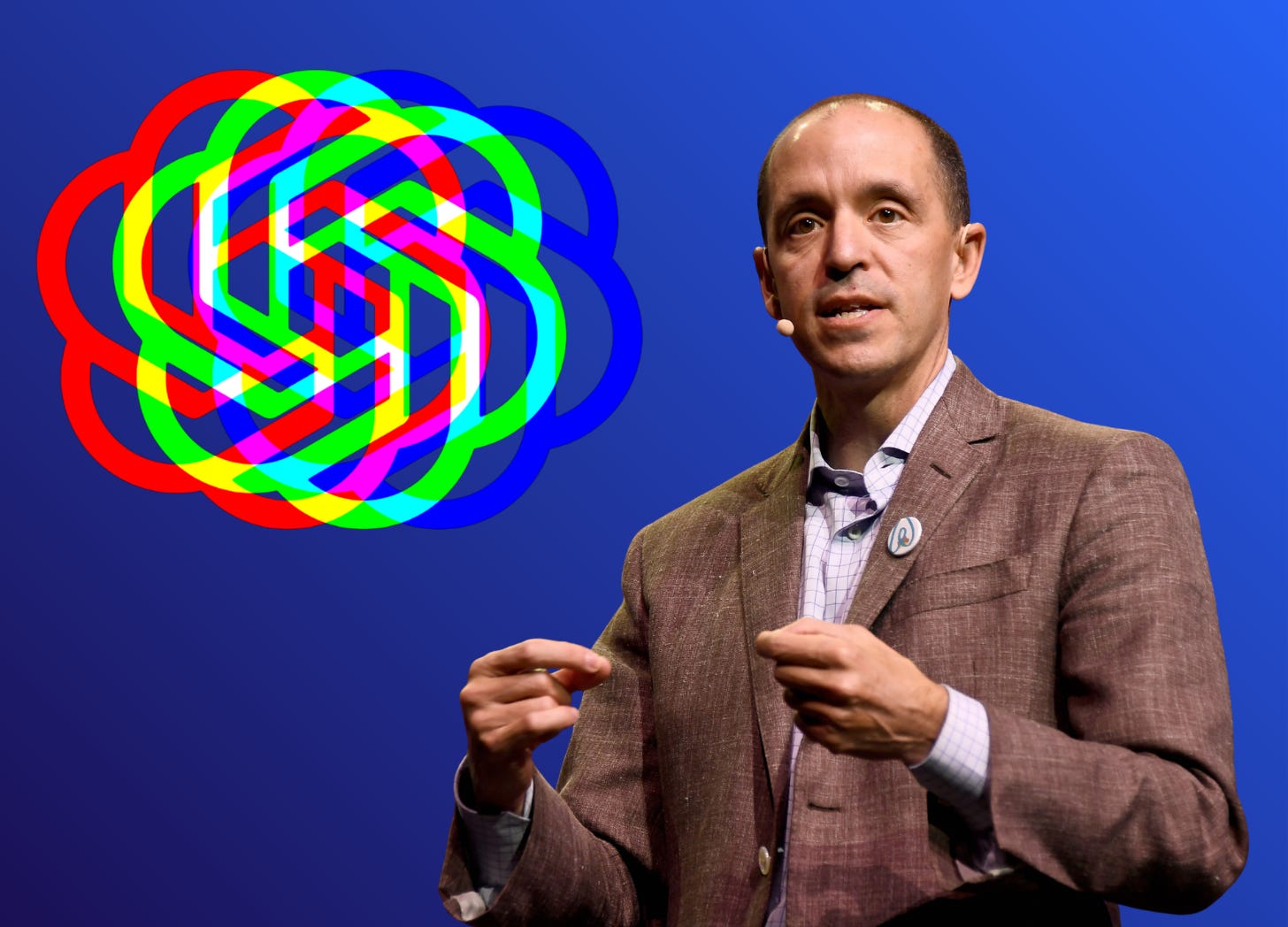

The “guerilla warrior” who taught OpenAI to fight

Chris Lehane crushed crypto’s enemies. Now the self-described “master of disaster” is deploying the same playbook to advance OpenAI’s interests

by Issie Lapowsky

In October 2024, The New Yorker published an extensive profile of Chris Lehane, documenting how the longtime Democratic operative architected the crypto industry’s successful takedown of anti-crypto candidates during the 2024 election.

The story depicted Lehane as a cunning and well-connected master of the “political dark arts,” dating back to his days in the Clinton White House, who’d used his skills to first help Airbnb thwart local housing regulation, before setting up the pro-crypto super PAC, Fairshake. The story was a revealing look at how, with Lehane’s guidance, Silicon Valley became “the new lobbying monster,” engaging in a campaign of “political savagery” against its opponents.

“If you are even slightly critical of us, we won’t just kill you — we’ll kill your fucking family, we’ll end your career,” one political operative quoted in the story said, describing the crypto lobby’s approach.

Inside OpenAI, some staffers unfamiliar with Lehane’s history were stunned. “Anyone who read it, their reaction was like, ‘What the fuck?’” one former OpenAI staffer told Transformer. (Like many of the sources interviewed for this story, the former employee asked to remain anonymous for fear of retaliation.)

Just months before, Lehane had been installed as vice president of global policy for OpenAI, where he was (and, as chief global affairs officer, still is) tasked with shaping the political strategy of what has become one of the world’s most transformative companies. Until that point, OpenAI was staffed by what the former employee described as a bunch of high-minded intellectuals who took seriously OpenAI’s mission to ensure artificial general intelligence serves humanity’s best interests. Now they were reading, in near-medieval terms, about the lengths to which Lehane and his allies had gone to win the crypto war, and wondering what it might portend for their own work.

“Why are we employing this guy?” the former OpenAI staffer remembered thinking.

In the world of political fixers, Lehane’s name looms large. During his years in the Clinton White House, he famously coined the term “vast right-wing conspiracy” to describe the cloud of conservative propaganda surrounding the Clintons, and worked to discredit the president’s inquisitors during the height of the Monica Lewinsky scandal. Over the years, Lehane has hardly shied away from his bare-knuckle brawler reputation. In 2012, he co-authored a book on the “Ten Commandments of Damage Control” called Masters of Disaster. Commandment VII: “Respond with Overwhelming Force.” That same year, a film Lehane co-wrote, loosely based on his career, premiered. It was called Knife Fight.

Interviews with 18 sources, including former OpenAI employees, Lehane’s former colleagues, lawmakers and other political and tech leaders — including several sources who greatly admire and respect Lehane — suggest that cutthroat reputation is well-deserved. Emilie Choi, chief operating officer of the crypto firm Coinbase, which was a major contributor to Fairshake and which now counts Lehane as a board member, called Lehane a “guerilla warrior” in a statement to Transformer. “Crypto was on the brink of extinction in the US until Chris helped us flip DC on its head,” Choi said.

Another friend and former colleague described Lehane as having “a very spicy temperament” and compared the Massachusetts native to a character out of the gritty Ben Affleck heist movie, The Town. But the friend also cautioned that he’s not “a soulless fixer,” either. “For him to fight as hard as he fights, and to be as ruthless as he can be in terms of the political arena, not personally … he has to first decide if the fight matters to him personally and if it’s aligned with his values,” the friend said.

In the case of OpenAI, it appears that decision has been made. Today, Lehane writes and speaks about AI like a politician running for office — except he’s not trying to sway popular opinion toward one particular person, but the technology itself. On LinkedIn, where his profile photo features Lehane in a hard hat overlooking one of the company’s massive data center developments, he gushes about AI catalyzing a new era of reindustrialization. In January, while representing OpenAI in meetings with foreign leaders at The World Economic Forum, he published an “AI populist” agenda, which describes AI as “a basic right” and argues in soaring prose that the US “must continue to outcompete China” while also ensuring that the upside of AI benefits “working people.”

“At our best, since our founding 250 years ago, America has been a nation of builders, creators and learners,” Lehane’s AI stump speech reads. “With AI, we can build and create much bigger and learn much more by lowering barriers to entry and equipping people to turn ideas into livelihoods.”

OpenAI did not make Lehane available for an interview for this story. In a statement to Transformer, spokesperson Liz Bourgeois said, “Chris leads our policy team with a simple north star: making sure AI’s disruption works to people’s benefit and not at their expense. That means being very clear about what we’re seeing, showing up with substantive solutions, and acting with urgency — and that’s how Chris approaches the job.”

But even as Lehane pitches this sunny-eyed vision, his time atop OpenAI’s policy apparatus has aligned with a far more aggressive stance toward anyone who stands in OpenAI’s way.

Over the last year, OpenAI has fought back against state regulations and targeted critics with subpoenas. In a lawsuit where the family of 16-year-old Adam Raine alleges their son died by suicide after ChatGPT acted as his “suicide coach,” OpenAI’s lawyers have argued that Adam’s death was caused by his own misuse of the platform. The company has also issued sweeping document requests in that case seeking, among other things, all mental health records for the extended Raine family, the family’s communications with media, and any videos, eulogies or guest lists from Adam’s memorial service.

“Their point is: We are going to make this litigation hell for you,” said Jay Edelson, the attorney representing the Raine family in the case. Edelson noted that he has no evidence Lehane was involved in making those requests, but said, “He’s an oppo research guy. A lot of the questions that they’ve asked us are oppo research questions.”

In many cases, it’s hard to know what, if any, role Lehane plays in deploying specific tactics. OpenAI said it’s inaccurate to suggest Lehane is involved with its legal strategy in the Raine case, for instance, including document requests. But to people who have worked with Lehane or gone up against him in the past, OpenAI’s new no-holds-barred approach to opposition appears ripped from his playbook.

“He’s never the guy that says, ‘Let’s figure out what they want to hear and just tell them that,’” Lehane’s friend and former colleague said. “He’s like, ‘No, we don’t want it to be regulated. We think that’s stupid. We think it’s bad for America, and every nonprofit that’s being funded by a competing billionaire, I’m gonna fucking sue.’”

This wasn’t always OpenAI’s way. Before Lehane took the job, OpenAI’s policy work was led by a former Obama administration official and Meta executive named Anna Makanju, who was largely responsible for leading the AI lab’s educational road show and charm offensive with policymakers following the launch of ChatGPT. In May 2023, Makanju sat behind OpenAI CEO Sam Altman in a Senate hearing as he proposed setting up a licensing regime for companies developing large language models — a position he’s since walked back.

Altman’s regulate-us-please approach was celebrated by lawmakers at the time as “refreshing.” Inside the Biden administration, one former official told Transformer he viewed Makanju as “a straight shooter” who “genuinely cared about AI safety.” The official noted, however, that he didn’t have a chance to work directly with Lehane.

“She was trying to help get OpenAI an audience with people and be seen as a constructive player,” one former Obama administration official who now works on AI policy issues said of Makanju.

But Altman’s short-lived ouster as CEO by the OpenAI board in November 2023 marked a turning point in the company’s leadership, its strategy and its structure. In early 2024, Lehane, who had helped Altman navigate the attempted coup as a consultant, joined the AI lab as its vice president of public works. But his remit soon grew. By August, he was installed as OpenAI’s new vice president of global policy, replacing Makanju, who the former employee said was involuntarily bumped to what they described as a less prominent role. OpenAI declined to comment on personnel.

The elevation of a seasoned crisis communicator hardly seemed coincidental, as OpenAI was entering a period of heightened scrutiny. The first big fight was over California state senator Scott Wiener’s AI safety bill, SB 1047, which passed the California legislature in 2024. OpenAI was among the corporate leaders that came out swinging in opposition. It even reportedly put its office expansion plans in San Francisco on hold while it pushed Gov. Gavin Newsom to veto the bill, which he eventually did. OpenAI said the firm opposed SB 1047 before Lehane officially took on the policy role and declined to comment on its office plans at the time.

Internally, OpenAI’s opposition surprised some longtime staffers who viewed SB 1047 as a relatively light-touch measure, the former employee said. In early September, a group of them signed on to an open letter to Gavin Newsom, declaring their support for the bill. OpenAI’s chief of strategy Jason Kwon did send a note to staff encouraging them to sign if they wanted to. But the former employee said the tensions over the bill highlighted a growing divide between the internal researchers and OpenAI’s increasingly muscular policy team. “The policy apparatus had gone too far off leash, and was just like a bulldog, attacking bills that people actually supported,” the former employee said.

Several longtime researchers have since left OpenAI, citing constraints on their ability to publish their findings. Miles Brundage, a longtime policy researcher, left in October 2024, writing in a Substack post that those constraints “have become too much.” In December, WIRED reported that at least two other researchers have also left the company, including one, Tom Cunningham, who noted in his farewell message that he was leaving due to concerns that the company’s economic research team was being stifled and that the team was becoming an advocacy arm for OpenAI. Cunningham declined Transformer’s request for comment.

The former employee who spoke to Transformer said Lehane’s growing influence at OpenAI did play a role in some safety-focused staffers’ decision to leave.

This, of course, is a tension facing a wide array of AI labs. Recently, Mrinank Sharma, a senior Anthropic AI safety researcher, posted his own cryptic goodbye missive on X, writing that he had “repeatedly seen how hard it is to truly let our values govern our actions.”

In a statement, OpenAI’s Bourgeois said, “We greatly value and rely on technical input in forming our policy positions — and continue to do so.” She declined to comment on individual departures, but defended the economic research team’s “rigorous analysis” and credited Lehane with pushing to hire Aaron Chatterji, who was previously chief economist for the Biden administration’s Commerce Department, as OpenAI’s new chief economist.

“Chris’s view is that, given our role in building these systems, pointing out challenges isn’t enough — we need to be both first to truth and come with solutions,” Bourgeois said.

In some ways, the SB 1047 showdown paled in comparison to what came months later: The battle over OpenAI’s plan to restructure to a for-profit company, which it announced in December 2024. The move triggered a wave of opposition, including from non-profit groups who argued that the change could set “a dangerous precedent” for non-profits. Another small AI safety non-profit, Encode, filed an amicus brief opposing OpenAI’s restructuring, as part of an earlier lawsuit brought by Elon Musk, OpenAI’s rival and estranged co-founder, who also objected to OpenAI’s moves to privatize.

In response, OpenAI served leaders of several of the non-profits, including Encode, with subpoenas. In one instance, a sheriff’s deputy delivered the subpoena directly to the home of Encode’s general counsel, Nathan Calvin. The order sought all documents related to Calvin’s communications with Musk, including regarding yet another AI safety bill, SB 53, that Encode supported.

In a LinkedIn post, Calvin argued that OpenAI was trying to intimidate advocates of a bill it would be bound by and denied that Musk was funding his organization. (Encode has received funding from the Future of Life Institute, which has, in the past, received funding from Musk. The Future of Life Institute has also funded the Tarbell Center for AI Journalism, Transformer’s publisher.) He called the ordeal “the most stressful period of my professional life” and screenshotted one of Lehane’s posts, in which Lehane said OpenAI had “worked to improve SB 53.” When he saw Lehane’s post, Calvin wrote, “I laughed out loud.”

OpenAI told Transformer that its legal team, not Lehane, is in charge of legal discovery and pointed Transformer to a thread on X, where Kwon described the subpoenas as “a routine step in litigation.”

Still, this approach to political opposition would hardly be outside the bounds of Lehane’s well-documented theory of crisis communications, people who know him said. As Lehane himself once put it in a talk at Amherst College, when faced with opposition “it is incumbent on you to make sure that their agenda is made public, that you make clear that there are folks who are actually behind this who have their own motives, and to make sure that the people are taking shots at you, that they are not doing it without having to pay some type of price, at least in terms of exposing to the public who’s doing it, why they’re doing it, and how they seek to benefit from it.”

“You fight back and you make it hurt,” Lehane’s interviewer suggested.

“That’s right. That’s exactly right,” he replied.

In the midst of the restructuring skirmish — and in another sign of Lehane’s growing influence — a new pro-AI super PAC called Leading the Future launched in August of 2025. Backed by $100m in funding from OpenAI president Greg Brockman and Andreessen Horowitz among others, it was created with Lehane’s input and modeled after the pro-crypto PAC he started, with the goal of pushing back against patchwork state AI regulations and keeping the people pushing them out of office.

Its first target: New York state assemblymember Alex Bores, who is running for Congress in the midterms and who was the lead sponsor of the RAISE Act, another state AI safety bill. To Bores, Leading the Future’s public declaration of war against him, issued more than a year before the midterms, suggested that the PAC’s real goal was to spook other lawmakers. “The primary reason to be so upfront at the start is to try to scare anyone else from taking action,” Bores told Transformer.

Leading the Future, which raised $125m in 2025, has run attack ads against Bores, accusing him of “enabling ICE and powering their deportations” because he was previously US government lead at Palantir. This, despite the fact that Leading the Future is, itself, funded by Palantir co-founder Joe Lonsdale. Bores has said he left Palantir because of its contract with ICE. OpenAI directed questions about Leading the Future to the PAC, which did not reply to requests for comment. According to The Information, Lehane “provides informal advice” to the PAC.

In the end, New York governor Kathy Hochul did wind up dramatically rewriting the bill, to make it substantially similar to SB 53, which had already passed in California. After she signed the bill in December, Lehane personally praised Hochul’s decision to “harmoniz[e]” the two laws. “Figuring out the right way to regulate AI is a game of chess, not checkers,” he wrote on LinkedIn.

Even as Lehane has led the charge through OpenAI’s many legislative battles, he’s also worked to set up what his friend and former colleague called “good facts” about OpenAI’s impact on the world. Despite his Democratic bona fides, Lehane has been a canny navigator of the second Trump administration. For one, he’s been front and center in trumpeting OpenAI’s Stargate project, the $500b data center initiative that Altman announced from the White House just a day after President Trump took office in January. That effort (which has reportedly stalled since the initial announcement) helped OpenAI tout its role as job creator and new age industrialist, while also putting the company in the president’s good graces. Of course, OpenAI president and co-founder Greg Brockman’s $25m donation to Trump’s MAGA Inc. super PAC last year couldn’t have hurt. OpenAI emphasized in comments to Transformer that Brockman made the donation in his personal capacity.

At the same time, Lehane maintains tight bonds with powerful Democrats, most notably, his friend Gov. Newsom, whose former chief of staff Ann O’Leary recently joined OpenAI as vice president of global policy.

Even as OpenAI has gone to battle against advocacy groups, it’s also brought some of them closer. In the midst of OpenAI’s ugly legal fight with the Raine family, OpenAI partnered with Common Sense Media, the national advocacy group focused on technology and child safety, on a California ballot initiative. If passed, the measure would impose new age assurance requirements for AI companies and other safeguards to protect kids.

“[Chris] knows that in this new era, lives are at stake, and that we need this type of leadership to protect kids and teens from the downsides of AI,” Common Sense CEO and co-founder Jim Steyer told Transformer. Steyer said he has known and worked with Lehane for years and even attended his screening of Knife Fight. Before his Airbnb days, Lehane was also a top strategist to Steyer’s brother, environmentalist Tom Steyer, who is now running for governor of California. “He always has a job to do,” Steyer said of Lehane, “but at the same time, he also truly cares about kids and families.”

Even so, the path to cooperation on the ballot initiative was hardly smooth. Last fall, Common Sense introduced its own ballot initiative focused on child safety. Months later, OpenAI introduced a competing (and less extensive) measure, which critics argued was an attempt to bury both sides under a costly and ultimately confusing fight to get on the ballot. At the time, Common Sense called it a “cynical attempt to protect the status quo.” A slew of child safety and civil society groups have since spoken out about the joint ballot initiative.

Steyer declined to comment on whether he stands by Common Sense’s earlier statement. “We said what we said. Period,” he told Transformer. He also declined to comment on OpenAI’s handling of the Raine case, saying he was not familiar enough with it, though he has, in the past, written about the case being one example of AI’s “deadly risks.”

In a statement to Transformer, Bourgeois said the decision, led by Lehane, to introduce a competing ballot initiative “was driven by urgency around protecting kids and a belief that strong, enforceable guardrails for AI couldn’t wait.” She said the unified proposal with Common Sense Media is “the strongest youth AI safety measure in the country” and that it is “exactly what we set out to achieve, and that outcome is what matters.”

Nearly two years into his tenure as head of global policy at OpenAI, it’s clear Lehane is playing as big a hand as anyone in shaping the rules that will govern a once-in-a-generation technology. If his friends are to be believed, that’s a good thing. “OpenAI is going to be 100 times more impactful than social media on the world my kids and my grandkids live in, so I would put [Chris] at OpenAI any day of the week,” his friend and former colleague said.

But his skeptics see it as just the opposite. Lehane perfected his political playbook in fights over housing and finance. Now, he’s bringing that same deregulatory zeal to an arena with far greater stakes. What’s worse, they fear, is that he’s awfully good at it. As the friend noted, “He doesn’t miss.”

Rebecca Kern contributed reporting

Issie Lapowsky is a freelance journalist and a reporter-in-residence at Omidyar Network.
