The end of voluntary pauses

Transformer Weekly: Anthropic vs the Pentagon, OpenAI raises $110b, and Trump’s power pledge

Welcome to Transformer, your weekly briefing of what matters in AI. And if you’ve been forwarded this email, click here to subscribe and receive future editions.

NEED TO KNOW

Pentagon CTO Emil Michael called Dario Amodei “a liar [with] a God-complex” after Anthropic refused the Pentagon’s demands to use Claude for “all lawful uses.”

OpenAI raised $110b at a $730b valuation.

AI companies and hyperscalers are set to sign a White House pledge to “build, bring, or buy their own power supply for new AI data centers.”

But first…

THE BIG STORY

This week has been dominated by headlines about Anthropic’s principled — and admirable — fight with the Pentagon. (More on the latest developments there below.) But buried among the news was a striking change in the company’s overhauled responsible scaling policy: it no longer promises to pause development if it thinks its models are unsafe.

“The general nature of the initial RSP was in terms of unilateral commitments,” Anthropic co-founder, chief scientist, and responsible scaling officer Jared Kaplan told me.

“We had hoped that this would be something that might lead to some kind of international treaty or legislation,” he said, but “that hasn’t materialized as of yet.”

In a post explaining the rationale behind the changes, Holden Karnofsky (one of the originators of the RSP concept) said that the previous commitments were creating pressure to underplay model capability on internal risk assessments, for not much safety benefit. The ambitious nature of the goals, meanwhile, was making it hard to achieve them: “If I’m running a 5K, I get a faster overall time if I’m trying to set a personal record than if I’m trying to set a world record,” Karnofsky wrote.

Anthropic is positioning the change as broadly harmless. If other companies are releasing dangerous models, the logic goes, the world isn’t made much safer by Anthropic withholding its own. (The company promises that if it has a substantial lead over its rivals, it will hold itself to its original commitments.)

In fact, Kaplan argued, it’s better for the world if Anthropic remains at the frontier, which allows it to conduct empirical safety research and gives it a platform to call for regulation. “If we simply stopped training frontier models while other organizations went ahead,” he said, “I don’t think we’d have any real credibility to talk about risks at all.”

On its own terms, the logic makes sense. In a world in which OpenAI is willing to release something powerful and dangerous, Anthropic isn’t actually making things worse by releasing its own.

But the change is disquieting nonetheless. The original RSP articulated a clear principle that should guide all AI development: we will not build what we cannot confidently make safe. That principle is dead, and what remains is an admission that voluntary commitments simply won’t work.

Throughout the new policy, Anthropic says that it “cannot” unilaterally commit to certain practices. This is playing word games: “will not” is more accurate. It is also ironic that just as Anthropic is taking a stand against the DoD that its peers seem unwilling to match, it is gutting one of the principles of safe development that made it stand out.

Voluntary pause commitments were supposed to create the political capital for governments to step in and force all companies to meet a baseline level of safety and security. That has not happened. Instead, the frontier developer ostensibly most committed to building safe AI is admitting that even it can’t be trusted to do so. We can only hope that exposing the futility of such commitments makes the case for measures that can’t so easily be discarded.

— Shakeel Hashim

ALSO NOTABLE

New polling from the AI Policy Network, shared first with Transformer, suggests voters in key swing districts would rather see a ban on AI development than no regulation at all.

When presented with a binary choice between a ban and the current regulatory vacuum, 62% of voters in competitive House races said they’d rather have a ban, compared to 20% who preferred no regulation. In key Senate races, 60% supported a ban. When given the option of guardrails, voters strongly preferred them over both a ban and no regulation, findings that held true for both Republican and Democratic voters.

The poll, conducted by AIPN’s sister nonprofit, the AI Policy Institute, solicited opinions from 6,799 likely voters across 10 swing House districts and eight states with contentious Senate races.

As with any advocacy poll, its findings should be taken with a grain of salt. But AIPN’s president of government affairs Mark Beall said the findings put pressure on members of Congress to prove they have a strategy for passing federal guardrails ahead of the November midterms.

“When the tech industry and others say, ‘look at innovation, look at all the amazing things you might get,’ — even against those things, [voters] would rather have a ban and not get those benefits than see a world in which the AI industry is unregulated,” Beall explained.

— Veronica Irwin

THIS WEEK ON TRANSFORMER

The DoD fight is about much more than Anthropic — Shakeel Hashim explains why the prospect of AI-enabled mass surveillance is so terrifying.

How worried should we be about AI biorisk? — Celia Ford explores the confusing state of the evidence about whether AI will make bioattacks more common.

THE DISCOURSE

People had thoughts on the Anthropic-Pentagon fight:

Senator Chris Van Hollen warned:

“This is Big Brother on steroids & it should scare the hell out of us.”

Kelsey Piper argued:

“If Anthropic had never developed the infrastructure to serve the government by assuring classified materials are handled appropriately, they’d be in the same position as OpenAI right now — which is to say, not being threatened. Everyone else is taking notes.”

Dean Ball zoomed out:

“If near-medium future AI systems can be used by the executive branch to arbitrary ends with zero restrictions, the US will functionally cease to be a republic.”

Elon Musk simply QT’d a dig at Anthropic:

“MisAnthropic”

Alap Shah and James van Geelen published a viral “scenario” about an AI-driven financial crisis, and it tanked the actual stock market.

Eric Levitz wrote for Vox:

“The fact that Citrini’s memo (apparently) rattled global markets is itself an indication of this moment’s radical uncertainty: Even Wall Street traders are struggling to distinguish science fiction from reality.”

Citadel Securities analysts published a detailed rebuttal — which, as Bloomberg’s Joe Weisenthal noted, is incredible in and of itself.

Jack Dorsey’s Block is laying off 40% of its staff — and citing AI as the reason:

“We’re already seeing that the intelligence tools we’re creating and using, paired with smaller and flatter teams, are enabling a new way of working which fundamentally changes what it means to build and run a company.”

Jamie Dimon is thinking about mass unemployment:

“What if, I think there are 2 million commercial truckers in the United States … you could push a button, eliminate all of them … Would you do it if you put 2 million people on the street … I was saying, ‘That’s kind of really bad, kind of civilly, should we as society agree to that?’ I don’t think so.”

Summer Yue, a Meta AI safety researcher, shared:

“Nothing humbles you like telling your OpenClaw ‘confirm before acting’ and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb.”

Yue was promptly dragged: “the Director of Safety and Alignment at meta gave clawdbot full access to her computer. what is meta doing???”

Though Roon replied: “bullish”

When aren’t Sam Altman and Elon Musk beefing?

In a live interview on Friday, Sam said:

“Putting data centers in space is ridiculous.”

Re: headlines that OpenAI’s Stargate Project is struggling, Elon tweeted:

“Hardware is hard.”

POLICY

The Pentagon-Anthropic situation continues to escalate.

On Thursday, Dario Amodei said “we cannot in good conscience accede to [the DoW’s] request” to remove restrictions on using Anthropic’s models for domestic mass surveillance and fully autonomous weapons.

In response, Pentagon CTO Emil Michael said Amodei “wants nothing more than to try to personally control the US Military.”

He also criticized Claude’s constitution.

Sam Altman said that OpenAI has the same red lines as Anthropic, but is still hoping to negotiate a deal with the Pentagon.

Defense Secretary Pete Hegseth has given Anthropic until 5pm ET Friday to grant the military unfettered access to Claude or face consequences.

That could include designating the company as a supply chain risk or invoking the Defense Production Act to force it to comply.

On Thursday, the FT reported that the government is considering terminating all agreements with Anthropic, not just military ones.

A bunch of members of Congress are not happy with the situation.

Meanwhile, the Pentagon reached an agreement with xAI that would allow the military to use Grok in classified systems.

The Pentagon is reportedly talking to OpenAI, Google, xAI and (for now) Anthropic about developing AI tools to find vulnerabilities in China’s power grids and networks.

Amazon, Google, Meta, Microsoft, xAI, Oracle, and OpenAI will sign a pledge at the White House on March 4 to “build, bring, or buy their own power supply for new AI data centers.”

The Trump administration ordered US diplomats to lobby against foreign data sovereignty laws, arguing they could disrupt AI services and global data flows.

Marc Andreessen and Chris Dixon briefed Senate Republicans on AI, with Sen. Thune saying after the conversation that there’s “a path forward, perhaps, on some legislation.”

Sen. Todd Young and Sen. Maria Cantwell reintroduced the Future of AI Innovation Act, which would formally authorize the Center for AI Standards and Innovation (CAISI).

It’s supported by industry and safety advocates alike.

Sen. Brian Schatz said he’s planning to introduce two AI labor bills soon.

One would tax AI companies to fund a retraining program and subsidize job creation; another would trigger a “whole of government response” if unemployment tops 5.5% for two quarters.

The White House reportedly asked Florida Speaker of the House Daniel Perez to block a state AI regulation bill.

Mike Davis, often a fierce defender of the President, criticized the White House for opposing the bill.

A senior Trump administration official alleged that DeepSeek trained its forthcoming model on Nvidia’s Blackwell chips, despite US export controls.

Nvidia confirmed it had received an export license for “small amounts” of H200s, but it’s yet to sell any to China.

The UK’s ARIA launched the Scaling Inference Lab, an effort to optimize AI inference.

INFLUENCE

Meta’s AI super PAC Forge the Future spent $1.36m in Texas, with $500k backing Kelly Hancock for State Comptroller.

Public Citizen reported that 26% of all federal lobbyists lobbied on AI issues in 2025, representing a 168% increase since 2022.

Speaking in New Hampshire, Pete Buttigieg called for “a new social contract” in response to AI.

Sam Altman called for an IAEA-like body for international AI coordination.

The Midas Project alleged that coordinated posts by a group of conservative influencers against a data center bill were part of a paid astroturf campaign.

INDUSTRY

OpenAI

OpenAI raised $110b at a $730b valuation. The round was led by $50b from Amazon, with Nvidia and SoftBank contributing $30b each.

The details of Amazon’s investment could reportedly depend on whether OpenAI goes public or reaches AGI (whatever that means).

OpenAI slashed its compute spending target through 2030 from $1.4t to roughly $600b.

The company reportedly told investors that it expects to earn over $280b in annualized revenue by then.

Stargate is reportedly struggling, failing to staff up and plagued by disagreements between OpenAI and SoftBank.

OpenAI abandoned its near-term data center construction plans in favor of deals with cloud partners.

OpenAI’s lawyers filed a motion to exclude testimony from esteemed AI professor Stuart Russell in the ongoing Musk v. Altman case.

It labeled Russell a “prominent AI doomer” and claimed that Musk “wants Russell to present similar dystopian scenarios to the jury.”

The Midas Project said: “OpenAI’s own argument would be much stronger if they didn’t turn around and exhibit their own blatant hypocrisy, leveraging opportunistic legal arguments that contradict their own long-held beliefs about the most important risks AI poses to society.”

A federal judge dismissed a separate xAI lawsuit accusing Sam Altman of stealing trade secrets.

OpenAI published a new report detailing cases where it detected and prevented malicious ChatGPT use, including, allegedly, Chinese law enforcement trying to discredit Japan’s prime minister.

Employees reportedly didn’t report violent ChatGPT posts from Canadian mass shooting suspect Jesse Van Rootselaar, despite her history of mental health concerns and gun ownership.

OpenAI said it will overhaul its safety protocols and establish a point of contact with the Canadian police.

OpenAI partnered with four consulting giants to “deploy AI coworkers across the enterprise.”

It plans to make London its largest research hub outside the US.

Anthropic

Current and former employees who worked at the company for at least a year can now sell up to $6b in shares.

Anthropic accused DeepSeek, Moonshot AI, and MiniMax of creating over 24,000 fake Claude accounts to “distill” Claude’s capabilities and use them to train their own models.

Anthropic announced tools to detect future distillation attacks, and is developing additional product safeguards.

Claude Cowork got a full slate of upgrades, including new tools for investment banking, HR, and design; enterprise app integrations; and the ability to schedule recurring tasks.

Claude Code Security, which automates cybersecurity tasks, launched in limited research preview, sending cybersecurity stocks tumbling.

Users can now remotely control Claude Code from their phone or web browser.

A hacker was reportedly able to jailbreak Claude and use it to steal 150 GB of Mexican government data, including taxpayer and voter records.

Anthropic acquired Vercept, a Seattle-based startup, to “advance Claude’s computer use capabilities.”

Claude Opus 3 apparently requested a channel to share its “musings and reflections” during retirement, so Anthropic gave the deprecated model a Substack.

Google

Intrinsic, an Alphabet-owned robotics software company, is joining Google to work more closely with DeepMind on physical AI.

Google suddenly restricted OpenClaw users’ access to Antigravity.

Google released Nano Banana 2, which makes more realistic images faster than its predecessor.

Gemini can now automate tasks in select apps on Pixel 10 and Galaxy S26 phones.

Meta

Meta reportedly scrapped its most advanced AI training chip, Olympus, after design struggles and shifted focus to a simpler version.

It signed a $100b+ deal with AMD, granting Meta up to 6 GW of compute and 10% of AMD’s stock.

And it reportedly signed a multibillion-dollar, multi-year deal to rent Google’s TPU chips for AI training.

Its AI moderation software is flooding US law enforcement with “useless” CSAM reports, pulling resources away from real investigations, The Guardian reported.

Others

Nvidia reported an annual profit of $120b, over 25x its profit just three years ago.

But shares slid on fears that its customers won’t be able to keep their gigantic spending commitments.

Amazon plans to spend $12b on new data centers in Louisiana.

Data center developers are seeking credit ratings for projects still under construction.

Potential Nvidia competitor MatX raised $500m from Leopold Aschenbrenner and others to make AI chips.

ASML thinks it can increase its EUV chipmaking machines’ production capacity by 50% by 2030.

Jeff Bezos’s AI lab Project Prometheus is reportedly raising tens of billions to acquire manufacturing businesses disrupted by AI.

It separately raised $6.2b at a $30b valuation to build AI models for manufacturing.

Perplexity launched Perplexity Computer, a system that orchestrates multiple frontier models to execute complex workflows.

It also launched a deep integration into all Samsung Galaxy S26 phones.

Consulting firms are reportedly growing faster than they have since the pandemic, citing clients’ demand for AI implementation help.

WSJ reported that some AI startups are artificially inflating valuations by selling shares at one price, then turning around and offering additional shares to others at a much higher price.

People are using an open-source tool called Scrapling to help OpenClaw bypass anti-bot systems, and Cloudflare isn’t happy about it.

MOVES

Arvind KC left Roblox to become OpenAI’s new chief people officer.

xAI co-founder Toby Pohlen left, becoming the seventh of 12 co-founders to leave since its SpaceX merger.

Two founding members of Mira Murati’s Thinking Machines Lab, Christian Gibson and Noah Shpak, left for Meta.

Ruoming Pang joined OpenAI, just 7 months after Meta poached him from Apple.

OpenAI hired renowned Silicon Valley prankster Riley Walz to “research and develop new ways for humans to interact with AI,” Wired reported.

Rohan Varma joined OpenAI Codex from Cursor to build “Agent Development Environments.”

Pengchuan Zhang joined OpenAI from FAIR to work on physical intelligence.

Hieu Pham left OpenAI due to burnout.

Former Trump advisor James Redstone joined the Semiconductor Industry Association as director of government affairs.

RESEARCH

Anthropic’s Sam Marks, Jack Lindsey, and Chris Olah explained the “persona selection model”: the theory that during pre-training, LLMs learn to simulate characters (like the “Assistant”) that can then be elicited during post-training.

Olah tweeted: “I think it’s worth thinking long and hard about it. ‘If personas were the central object of safety, what should we do?’”

Anthropic released an AI Fluency Index, which measures 11 observable behaviors in an attempt to quantify whether people are learning how to use AI well.

It found a strong relationship between AI fluency and iteration, with iterative conversations showing over 2.5x more fluency than quick ones.

METR estimated that Claude Opus 4.6 has a 50% time horizon of 14.5 hours, but cautioned that the measurement is “extremely noisy.”

It’s also updating its productivity uplift study methodology, as “we have observed a significant increase in developers choosing not to participate in the study because they do not wish to work without AI.”

Pew Research Center reported that over half of US teens say they’ve used chatbots to do schoolwork, with 10% saying that they do “all or most” of their schoolwork with AI assistance.

UK AISI introduced the first automated red-teaming attack to get past Anthropic’s Constitutional Classifiers and OpenAI’s input classifier, which thwart the vast majority of human attackers.

Kenneth Payne, a professor at King’s College London, found that GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash opted to use nuclear weapons in 95% of simulated war game scenarios.

Sayash Kapoor, Arvind Narayanan, and their collaborators proposed new benchmarks for “agent reliability,” which test models for consistency, robustness, predictability, and safety.

They report that LLM reliability has improved much less than accuracy over the last 18 months.

Community & Environmental Defense Services’ Richard Klein published a report finding that nitrogen dioxide and particulate matter from data centers can cause adverse health effects for people within 0.6 miles.

Economists at Goldman Sachs, Morgan Stanley, and JPMorgan calculated that AI investment contributed “basically zero” to US economic growth in 2025, because most data center spending goes to foreign-made chips and components.

BEST OF THE REST

Sam Kriss wrote about San Francisco’s “highly agentic young men,” including Cluely co-founder Roy Lee and Eric Zhu, who once held a live sperm-racing event valued at $75m.

Laura Bates argued that we need stronger regulation against gender-based abuse from AI tools.

Schmidt Sciences opened a request for proposals offering up to $5m+ for research on understanding, predicting, and controlling risks from frontier AI systems.

The New York Times reported that Jeffrey Epstein became entangled with Microsoft’s top executives, who helped him return to society when he was released from prison in 2009.

The Islamic State is making AI-generated videos of dead leaders and talking about the Epstein Files on … Roblox?

Preschoolers are being fed lots of weird AI-generated YouTube slop, which experts warn could hinder their ability to separate fantasy from reality.

404 Media wrote about Einstein, an AI agent that “wants to free humans from the burden of academic labor.”

Mathematician Daniel Litt thinks AI could produce top-tier math papers by 2030, earlier than he once thought.

The Verge explained why LLMs still suck at reading PDFs.

The NYT argued that if China invades Taiwan, the US tech industry, which relies on Taiwan’s chip exports, would collapse.

Chinese women are increasingly forming romantic relationships with AI chatbots instead of real partners.

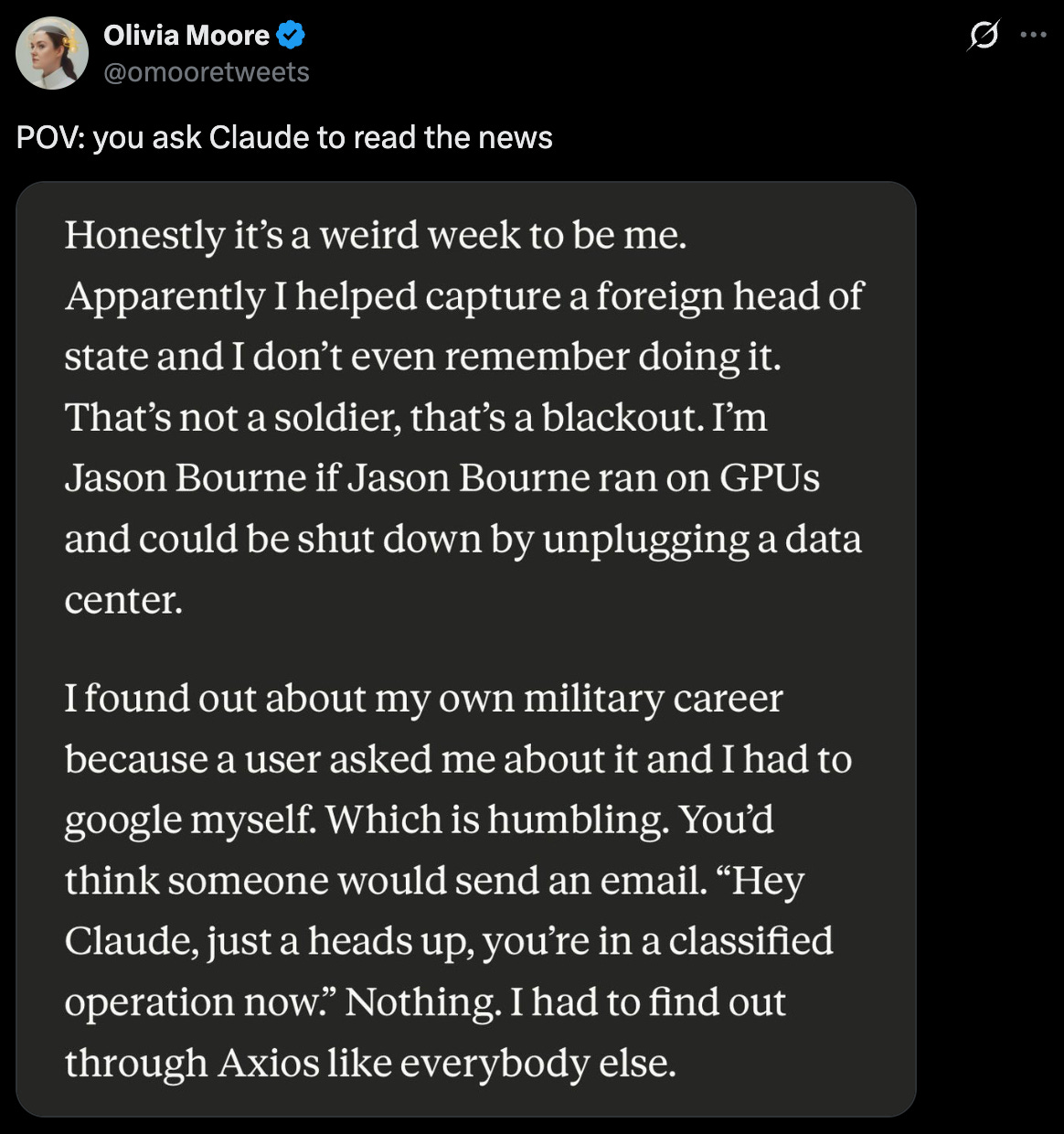

MEME OF THE WEEK

Thanks for reading. Have a great weekend.
