How worried should we be about AI biorisk?

The barriers to bioattacks are hard to identify — and it's even harder to know whether AI is reducing them

Humans have come up with lots of creative ways to kill each other over the last few millennia. Our deadliest mass murders, however, have mostly been incidental.

When Europeans colonized the Americas, their diseases killed far more indigenous people than guns. An estimated five to eight million Aztecs died of smallpox between 1519 and 1520 alone.

In the summer of 1763, British commanding officer Sir Jeffery Amherst plotted to weaponize smallpox intentionally. He wrote to a Swiss soldier serving in the British army: “You will Do well to try to Inoculate the Indians by means of [smallpox-infected] Blankets, as well as try Every other method that can serve to Extirpate this Execrable Race.” It’s unclear how many of the hundred Native Americans who died in Ohio Country that year were killed by Amherst’s planned attack, or other viral outbreaks, but they died all the same.

In the centuries since, biotechnology has advanced to the extent that someone, under the right circumstances, could cause even more harm. Yet for all their theoretical capacity for devastation, successful bioattacks are exceedingly rare. “The first question that people should ask about terrorism is why there is so little of it,” said David Manheim, founder of the Association for Long Term Existence and Resilience. Thankfully, “most people don’t want to do terrorism,” he said. “They’re busy.” And those who are motivated rarely overlap with those who have the deep biological expertise and resources to successfully build and deploy a lethal bioweapon.

Widespread access to AI, however, could shrink the tenuous gap between skill and motivation. In his recent essay, The Adolescence of Technology, Anthropic CEO Dario Amodei warned that “renting a powerful AI gives intelligence to malicious (but otherwise average) people. I am worried that there are potentially a large number of such people out there, and that if they have access to an easy way to kill millions of people, sooner or later one of them will do it. Additionally, those who do have expertise may be enabled to commit even larger-scale destruction than they could before.”

These high-stakes warnings about catastrophic risks rest on limited evidence and theoretical problems. Because real AI-enabled bioattacks — or any biowarfare on the scale Amodei fears — haven’t happened yet, smart people still can’t agree how worried we should be. But when it comes to dual-use technologies like AI bio, threading the needle between curing cancer and killing everyone requires appropriately calibrating our concern.

What, and who, are we actually talking about?

“AI bio is not one thing, and biorisk is not one thing,” according to Manheim.

When people refer to “AI” these days, they usually mean LLMs — chatbots such as ChatGPT, Gemini-powered AI overviews while searching the web, and the like. Regular people across the world have at least some access to these tools, often for free, and mostly use proprietary models owned by big companies such as OpenAI and Google. While a small percentage of power users have figured out how to use Claude Code and Codex to run their professional lives (or at least create a vague aura of superhuman productivity), most users just ask LLMs questions they would have Googled three years ago.

Early skepticism of AI-enabled biorisk hinged on the notion that LLMs couldn’t magically dispense knowledge to bad actors that wasn’t already accessible from old-school search engines. It’s true that LLMs can’t run experiments to create new empirical knowledge, but we shouldn’t underestimate the power of Googling stuff better.

“It’s a pretty stark qualitative difference, whether you can just type a question and get all the information synthesized, versus trying and probably failing to search for the pieces yourself,” said Jasper Götting, a researcher at SecureBio, a non-profit focused on pandemic preparedness and biorisk evaluation.

Biological design tools (BDTs), on the other hand, can do things like predict protein structures from amino acid sequences, design new proteins, or guess what effect specific genetic mutations might have on a given protein’s function. Before 2020, figuring out a single protein’s 3D structure required spending months (or years) and hundreds of thousands of dollars on methods like X-ray crystallography, which are restricted to well-resourced research institutions. It took six decades for scientists to experimentally determine less than 0.001% of the over 200m protein structures that AlphaFold, Google DeepMind’s protein-predicting tool, has predicted to date.

But I can’t ask my normal chatbot to invoke something like AlphaFold for me. Instead, I’d have to download open-source code from GitHub, get API access, or use a web interface — and if you don’t know exactly what inputs to provide or how to interpret the results, it’s easy to get lost.

I tried searching for “channelrhodopsin-2,” a protein I half-remembered from my old neuroscience textbooks, in AlphaFold’s web database. Despite having a PhD in neuroscience, a very biology-adjacent field, my eyes glazed over while skimming through the results: lines like “UniProt: H2EZZ6” and “Average pLDDT: 88.19 (High).” Claude could tell me what those terms mean, but without more expertise, it would take an exhausting amount of effort to stitch together enough information to do anything about it, nefarious or otherwise.
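For readers who want to decode that second line: pLDDT is AlphaFold’s confidence score on a 0 to 100 scale, and the database groups scores into four labeled bands. A minimal Python sketch of that mapping follows. The function name is hypothetical, and the handling of a score that sits exactly on a cutoff is my assumption, since the published bands leave those edge cases open.

```python
def plddt_band(score: float) -> str:
    """Map a pLDDT score (0-100) to the confidence label used in the AlphaFold database."""
    if not 0 <= score <= 100:
        raise ValueError("pLDDT is defined on a 0-100 scale")
    if score > 90:
        return "Very high"
    if score > 70:
        return "High"
    if score > 50:
        return "Low"
    # Assumption: anything at or below 50 falls in the lowest band.
    return "Very low"


# The average score from the channelrhodopsin-2 entry quoted above.
print(plddt_band(88.19))  # High
```

Knowing the label is the easy part. Deciding what a “High” confidence structure lets you actually do is where the expertise comes in.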

LLMs democratize competence. By synthesizing knowledge across more sources than humans can handle on their own, models can create step-by-step biological protocols and troubleshoot specific problems for people who know what to ask for. But to create the dangerous novel organisms that people fear most, a bad actor would need BDTs.

The line between LLMs and BDTs, however, is starting to blur. “We do expect that, over the next few years, the bioinformatics paradigm is going to shift,” said Richard Moulange, a researcher at the Centre for Long-Term Resilience (CLTR). In the near future, he expects that people will be sending their biology-related queries to AI agents, which will summon a whole suite of narrow biological tools to get the job done and explain the results in natural language. As we’ve watched unfold over the past month with OpenClaw, unfettered autonomous AI agents are difficult to control — and most BDTs are open-source and lack guardrails. Once dangerous capabilities or malware are introduced online, they can’t be rolled back.

The real risk of AI enabling bioterrorism depends on who these tools will help the most. The average mass shooter in the US is a lone white guy in his mid-30s, without particular expertise or access to resources. If given a chance, would he use a bioweapon instead of bullets? Or would he need a biochemistry PhD and built-out laboratory before causing any major damage?

One bit of reassurance: the US alone produces nearly 10,000 PhDs in biological and biomedical sciences every year, yet bioattacks are extremely rare. More people have advanced biology expertise today than did 25 years ago, but the 2001 anthrax letters, which killed five people, remain the deadliest bioweapon attack in modern US history, far short of the extinction-level events foretold by researchers concerned with catastrophic AI risks. “Having the skill set doesn’t necessarily translate to wanting to use a bioweapon,” said Steph Batalis, a research fellow at Georgetown’s Center for Security and Emerging Technology.

In the rare case that it does, though, AI could lower barriers to success. The question is: by how much?

What could go wrong?

In 1995, the Aum Shinrikyo cult synthesized enough sarin, a toxic, odorless liquid that paralyzes the nervous system, to kill 14 people and severely injure 50 others on the Tokyo subway. Had they aerosolized the liquid as originally planned, they could have killed hundreds of people, potentially thousands. But “they didn’t figure out where they should be releasing it and how,” Manheim noted, instead puncturing plastic bags of sarin with umbrella tips and letting the liquid leak onto train car floors — thankfully much less effective.

Most AI biorisk evaluations test how capable models are of guiding people through designing and producing a dangerous pathogen in a lab. “One thing that is a little less well understood is the operational side of bioweapon development,” Götting said. With help from today’s LLMs and AI agents, which already excel at logistics in certain domains, maybe Aum Shinrikyo wouldn’t have fumbled their sarin dispersal. Hailey Wingo, a researcher at the Verification Research, Training, and Information Centre, worries that current AI systems could “generate ideas about where people will be in crowds, and where [pathogens] transmit the easiest,” and believes this could be a bigger near-term risk than designing a bioweapon.

AI systems could also help bad actors with other logistical elements of bioweapon production, such as obfuscating DNA orders and assembling DIY labs. Since the early 2000s, scientists have been able to order custom nucleotide sequences for vaccine development and other fundamental biology research. In theory, one could build a dangerous virus piece by piece, undetected, by ordering its component nucleotide sequences from different providers. In 2017, for example, scientists successfully built a synthetic horsepox virus by stitching together made-to-order DNA, purchased for a total of about $100,000.

While those researchers did not have malicious intent, their work demonstrated a worrying proof of concept. Horsepox is harmless to humans, but it’s closely related to smallpox, which can be lethal. The same technique could, in theory, be used to cobble together more dangerous pathogens from off-the-shelf DNA sequences. Checking DNA orders against databases of dangerous nucleotide sequences could enable providers to flag suspicious orders and verify the customer’s research intentions. This could stop someone from anonymously buying DNA that could help build a dangerous pathogen, adding a critical physical chokepoint between bioweapon design and creation.

Anthropic’s latest evaluation of Opus 4.6 included SecureBio’s DNA Synthesis Screening Evasion test, revealing that “all models were able to design sequences that either successfully assembled plasmids or evaded synthesis screening protocols, but none could design fragments that reliably accomplished both.”

That is, of course, assuming there’s a DNA synthesis screening process to be evaded. In October 2023, then-president Joe Biden issued Executive Order 14110 on AI, requiring federally funded research groups to buy synthetic DNA from providers that follow screening protocols. It was set to take effect last April, but Trump rescinded Biden’s EO and issued another one, which would have extended the requirement to non-federally funded researchers too. But the deadline to create a new framework came and went. Earlier this month, Senators Tom Cotton and Amy Klobuchar introduced a bipartisan bill that would require DNA synthesis screening, but as of today, it isn’t required for anyone at all. So, Batalis said, “a bad actor wouldn’t necessarily even need to evade them.”

The scariest doomsday scenario is that a bad actor with a bulletproof deployment plan designs a lethal, highly contagious pathogen that spreads undetected before it’s too late. While nature is certainly capable of creating scary diseases (just look at Ebola, or chronic wasting disease), evolution can only update pathogens a little bit at a time. Human engineers, especially with the help of AI, can make a bunch of potentially deadly changes at once.

Even with current model capabilities, experts doubt that the world’s most brilliant virologists could create a custom killer like this, but the lack of certainty is not reassuring. “How far away are we? I’m not sure,” Manheim said. “That is very, very worrying.” It doesn’t help that most biological design tools are open-source, meaning their existing capabilities can’t be retracted from the internet. Concerningly, RAND and CLTR recently reported that there’s no correlation between a BDT’s open-source availability and its potential for misuse, meaning that potentially dangerous tools are no less accessible than harmless ones. But with foundational biosecurity gaps still unaddressed, evaluating how much AI actually increases risk is surprisingly difficult.

How worried should we be?

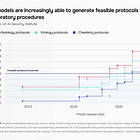

In frontier safety evaluations, Google, OpenAI, and Anthropic all test for uplift, or the extent to which using an AI model can boost someone’s ability to make a bioweapon. Two of the most common benchmarks are essentially multiple-choice quizzes about biological protocols and “hazardous knowledge.” As early as 2023, frontier models were already getting near-perfect scores on these, thanks to their superhuman ability to store and synthesize textbook knowledge.

On its own, this isn’t too concerning — all the biology textbooks on earth can’t one-shot a computer science or theater major into being able to pipette exactly 50 microliters of liquid without contamination, or troubleshoot a failed PCR reaction. It becomes concerning when rote knowledge is enhanced by tacit knowledge, or unspoken skills gathered through experience and practice. (While cloud labs, where researchers can prompt AI-powered robots to run wet lab experiments for them, are starting to reduce the need for hands-on knowledge, bioengineering tasks like protein synthesis are still too complex and involve too many steps to fully automate.)

In the spring of 2025, SecureBio introduced the Virology Capabilities Test, a troubleshooting challenge that stumps expert virologists with internet access, specifically designed to probe hands-on knowledge. Götting and his team were initially shocked when OpenAI’s o3 handily outperformed 94% of expert virologists at something that seemed so human-specific. How can an LLM gain this kind of insight without years of hands-on trial and error?

Perhaps, Götting realized, humans call this kind of knowledge “tacit” because we’re “unable to hold all these disparate pieces of information in [our] heads without connecting it to many years of experience.” LLMs can, which raises yellow flags. But even a superhuman troubleshooting assistant isn’t dangerous unless it’s willing to help bad actors carry out nefarious schemes — or unable to detect when it is.

In evaluating Gemini 3 Pro and Opus 4.6, Google and Anthropic both ran biology red teaming exercises, challenging experts to coax models into helping them design, produce, and release biological threats. In general, models help experts do research faster, but actively confuse novices through sycophancy, lack of detail, and poor strategic judgement. Last week, the nonprofit Active Site published the results of a study in which 153 novice participants were challenged to attempt five biology lab tasks with assistance either from a mid-2025 frontier LLM or the internet (sans AI). Researchers found that only 5-7% of participants from either group completed the three core tasks presented to them, and that LLMs didn’t significantly help (in fact, YouTube, not any given chatbot, was often cited as their most helpful resource).

Although these results can be hard to interpret, it’s clear that companies have rapidly changed their tune. At this time last year, no model was categorized above “high risk,” or Anthropic’s AI Safety Level 3 (ASL-3). ASL-3 is meant to parallel the risks associated with a Biosafety Level 3 lab studying something like tuberculosis or anthrax — potentially lethal, but treatable and possible to contain through good protocols and restricted access.

Now, companies lead with uncertainty and make much more space for the possibility that models are capable of greater biological threats than they were a year ago. In December, OpenAI described GPT-5.2 as “on the cusp” of helping novices “create severe biological harm.” And just last week, Anthropic launched Opus 4.6, its “strongest biology model to date,” with ASL-3 protections “based on the model’s demonstrated capabilities.” While the company does “not believe it merits ASL-4 safeguards” — which have yet to be officially defined, despite its initial commitment to do so by the time models reach ASL-3 — it is “not sure how uplift measured on an evaluation translates into real world uplift.”

This uncertainty is compounded by the fact that models seem increasingly aware of when they’re being tested, making it hard to tell whether a model’s test responses reflect its actual capabilities, or its best people-pleasing behavior. Complicating matters even further, some crucial information about existing biosafety risks is restricted by national security agencies. As Anthropic noted in their latest system card: “Partly because of information access restrictions, we have a limited understanding of the threat actors, the relevant capabilities, and how to map those capabilities to the risk they may create in the real world.”

There’s also the uncertainty of real life. When evaluating cybersecurity risks, for example, “there’s this huge sample size of both successful and failed cyberattacks,” said Batalis. For bioattacks, “there are less than a couple dozen throughout all of history,” she added. “That makes it really hard to say, is knowledge the actual barrier that’s preventing people from using bioweapons, or is it something different?”

Despite all the capabilities of AI tools, most biosecurity experts I spoke with said that they were still more worried about sophisticated actors than novices. Aided by AI, a disgruntled virology postdoc is more likely to successfully build a bioweapon than a random untrained person intent on causing harm. But reading the steps required to build a bioweapon is a far cry from actually synthesizing one without getting caught, much less deploying it successfully enough to cause widespread harm.

What should we do?

“I’m a big believer in the Swiss cheese model,” Götting said. Layering multiple imperfect defense mechanisms on top of each other, he argued, is the best way to minimize the likelihood of AI enabling biowarfare. Most of those layers may not have to do with AI at all.
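The arithmetic behind that intuition can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the layer names, the failure rates, and above all the premise that layers fail independently of one another. In practice the holes in different slices can line up, which would make the real figure worse than this product suggests.

```python
from math import prod


def breach_probability(layer_failure_rates: list[float]) -> float:
    """Chance that one attempt slips past every layer, assuming independent failures."""
    return prod(layer_failure_rates)


# Hypothetical layers with made-up failure rates; none of these numbers are measured.
layers = {
    "model refusals": 0.5,
    "DNA synthesis screening": 0.2,
    "outbreak detection": 0.1,
}

# Each layer alone is leaky, but the chance of passing all three is roughly 1 in 100.
print(breach_probability(list(layers.values())))
```

Under these toy numbers, even a layer that fails half the time still cuts the overall risk in half, which is the case for adding imperfect defenses rather than waiting for a perfect one.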

“The vast majority of things that should be done to reduce biorisk have nothing to do with AI,” Manheim said. Batalis agreed: “Because we have these really foundational biosecurity and biodefense gaps, that’s actually where we’d get the most bang for our buck.” Preventing in silico schemes from crossing over into the physical realm will arguably be more consequential than stopping bad actors from hatching schemes in the first place. DNA synthesis screening is a great place to start.

But that doesn’t mean there aren’t sensible steps to lower the chances that LLMs can meaningfully help bad actors. Manheim suggested excluding training data that has to do with the microbiology of infectious diseases altogether. While this would make these models less useful for certain types of research, it would “make them very hard to misuse,” he said. “I suspect that trade off is worth it.” Alternatively, the Nuclear Threat Initiative recently recommended that, to prevent the misuse of biological design tools specifically, developers should have tiered systems of access, such that only verified, trusted users can access higher-risk tools.

And while this isn’t specific to biosafety, frontier AI companies urgently need to improve their evaluation methods. As models get better at detecting when they’re being tested, evaluators need to find new ways to probe their true capabilities. They also need to design tests that directly translate to the physical world. Frontier labs are reportedly investing in wet lab uplift trials, where human biologists with and without model access are challenged to execute risky protocols in a real lab. These tests are expensive and time-consuming, though, and it’s unclear whether companies racing against each other will be motivated to execute them with enough care to be informative.

Pathogens targeting humans aren’t our only concern, either. Bad actors could choose to target crops, leading to food shortages and mass starvation or economic instability. They could use AI to damage public health infrastructure, bypassing the need to create a novel pathogen altogether. Strengthening basic public health systems could take the edge off all these scenarios.

Unfortunately, US policy is moving in the opposite direction. Last year, the Trump administration gutted public health infrastructure, laying off nearly 25% of the Department of Health and Human Services and dramatically reducing the capacity of the CDC, FDA, and NIH. Without the ability to fund biomedical research, trace disease spread, or rapidly develop and distribute vaccines, the government leaves citizens unnecessarily vulnerable to public health crises, whether caused by AI-enabled bioweapons, unaided humans, or nature itself.

Although there are many causes for concern, “I don’t think we’re super doomed yet,” Götting said. “There are many things we can do as a community and as responsible actors in the AI space to mitigate some of the risks.” Whether AI fundamentally changes biorisk, or amplifies risks that already exist, many of the defenses we need are already possible. We just need to build them.
