AI populism's safety problem

Transformer Weekly: OpenAI buys TBPN, Cantwell’s open to negotiations, and SpaceX’s mega IPO

Welcome to Transformer, your weekly briefing of what matters in AI.

NEED TO KNOW

OpenAI acquired daily tech talk show TBPN, which will sit under Chris Lehane.

Top Democrat Sen. Maria Cantwell signaled openness to the White House’s AI framework.

SpaceX is reportedly targeting a $2 trillion valuation in its IPO.

But first…

THE BIG STORY

AI populism is having a moment. But for traditional AI safety folks, it’s not quite working out as they might have hoped.

Last week Sen. Bernie Sanders and Rep. Alexandria Ocasio-Cortez released the “AI Data Center Moratorium Act,” described as “legislation that would enact a reasonable pause to the development of AI to ensure the safety of humanity.” It would put in place a federal ban on building data centers until legislation is passed that ensures “AI is safe and effective,” redistributes the economic gains of AI, and stops it from increasing electricity or utility prices.

It is not a well-thought-out piece of legislation. Instead it is, as Nat Purser writes, a “progressive policy grab bag” that applies a “single, unwieldy solution” to “many distinct problems.” It is much too vague about what the necessary federal legislation would look like — “safe” and “effective” are never defined, for instance. And while it does attempt to tackle the potential worst effect of a US moratorium — AI development shifting to countries with even fewer guardrails — by mandating an expansive export control regime, that’s at best a stopgap solution. Discussion of how to get to an international treaty is entirely absent.

It might be hard to care too much about the substance of a messaging bill which has virtually zero chance of becoming law. But the bill tells us something bigger about the state of AI populism.

In the anti-AI coalition, traditional AI safety concerns are a very junior partner. As Anton Leicht observes, environmental and labor groups “have bigger lobbies and bigger constituencies than catastrophic risks, so when there are trade-offs, they’ll bite against the ability of safety advocates.”

We’re already beginning to see this. While Bernie, of late a full-blown AI doomer, talked about existential risks at length in his announcement of the bill, AOC did not. Yes, she used the word “existential.” But she used it as Kamala Harris memorably did back in 2023: as a way to describe the very bad problems of ICE, deepfakes, and electricity costs, not in its true meaning of “we might all die.” Bernie is gearing up to hand the reins of left-wing populism to AOC. When he does, will safety concerns remain at all?

Similar tensions exist elsewhere. Sanders adviser Faiz Shakir recently accused traditional AI safety actors of “coziness around AI development,” drawing a distinction between them and “those of us who have far more committed views around pausing their AI development.” In North Carolina and California primaries, safety advocates have found themselves at odds with progressives who give existential risks little weight.

None of this is reason to write off populism’s ability to address the most catastrophic risks altogether, not least because alternatives may be even further out of reach. In the current political landscape, betting on technocratic solutions with teeth is optimistic, if not naive. Riding the wave of AI populism may be the only way for existential risk concerns to get a look-in at all.

That only works, though, if a durable coalition that actually cares about both catastrophic and near-term risks can be built. So far, the foundations look shaky.

— Shakeel Hashim

THIS WEEK ON TRANSFORMER

Can we ever trust AI to watch over itself? — Celia Ford on the perils of automating AI alignment research.

How the Iran war might affect the AI industry — Shakeel Hashim explores how the war is making chip shortages and reduced AI investment more likely.

THE DISCOURSE

Mustafa Suleyman has his own definition of superintelligence:

“Superintelligence is really about, ‘Are these models capable of delivering product value for the millions of enterprises that depend on us to deliver world-class language models?’”

Transformer’s own Shakeel Hashim tweeted:

“Me, looking at the digital god: ‘Is this capable of delivering product value for the millions of enterprises that depend on us to deliver world-class language models?’”

Ezra Klein noticed that AI power users seem … different:

“I found them notably insecure … They are racing one another to fully integrate AI into their lives and into their companies. But that doesn’t just mean using AI. It means making themselves legible to the AI.”

“I think the young will allow themselves to be known to their AIs in ways that will make their elders shudder.”

Jay Graber, Bluesky CEO-turned-chief innovation officer, announced a new agentic AI app, Attie:

“You describe the sort of posts you want to see, and the coding agent builds the feed you described … AI is an accelerant on whatever it’s applied to. I want it to accelerate decentralizing social and putting power back in users’ hands.”

Bluesky users hated this, of course.

Dean Ball theorized about left-wing AI denial:

“The notion that AI *is* a genuinely world-changing technology, that it can ‘go beyond’ its ‘stolen’ training data, breaks this load-bearing conception of the tech industry as vapid and superficial and, more importantly, of the people within it as blood-sucking thieves.”

OpenAI’s Boaz Barak offered some views on AI safety:

“We see some good news in alignment … we do not see very significant scheming or collusion in models, and so we are able to use models to monitor other models … The worst news is that society is not ready for AI, and is not showing signs of getting ready.”

POLICY

The federal government appealed last week’s ruling, which had stayed the supply chain risk designation, in Anthropic’s lawsuit against the Pentagon.

The appeal significantly escalated the fight, adding the heads of several unrelated agencies, including Scott Bessent, Paul Atkins, and Robert F. Kennedy Jr., as filers.

California Gov. Gavin Newsom issued an executive order that would potentially allow Anthropic to keep working with the California government.

It would also require AI companies bidding for government contracts to make certain safety and privacy disclosures.

Sen. Maria Cantwell, the most senior Democrat on the Senate Commerce Committee, signaled openness to the White House’s AI framework, breaking with her party and opening the possibility for bipartisan negotiations.

However, bipartisan negotiations still face significant obstacles, in part because of divides within the MAGA coalition and the White House’s historic unwillingness to make concessions.

For now, the most likely vehicle for an AI package, according to WP Intelligence’s Benjamin Guggenheim, is the NDAA towards the end of this year.

Texas Republican State Sen. Angela Paxton argued that states must preserve their ability to pass AI laws, pushing back against the White House’s preemption plans.

Iran’s IRGC directly threatened to target Apple, Microsoft, Google, Meta, Nvidia, and other US tech companies across the Middle East.

Super Micro co-founder Yih-Shyan “Wally” Liaw pleaded not guilty to charges of illegally diverting Nvidia-powered servers to China, violating US export controls.

Rep. Michael Baumgartner and Sen. Pete Ricketts introduced a bill to tighten controls on advanced semiconductor manufacturing equipment by banning DUV machine exports to China.

Anthropic signed an MOU to work with Australia’s AI Safety Institute.

INFLUENCE

A new pro-innovation political organization called Innovation Council Action, blessed by Trump AI adviser David Sacks, plans to spend over $100m on Republicans in the midterms.

Anthropic announced a bipartisan corporate PAC called AnthroPAC, funded by employee contributions of up to $5,000 per person.

Rep. Alexandria Ocasio-Cortez called on Democrats to reject AI industry donations ahead of the midterms.

Several new polls showed concerns about AI are growing.

The Hill & Valley Forum brought together Silicon Valley and banking leaders in DC, in contrast with an AFL-CIO conference that assessed AI’s labor impacts.

Anthropic was notably absent from the Hill & Valley Forum.

Common Sense Media solicited $10m annually from OpenAI, Anthropic, and Google to fund a new kids’ AI safety institute.

Separately, some child safety groups quit the Parents & Kids Safe AI Coalition once they realized OpenAI was funding it.

INDUSTRY

OpenAI

OpenAI acquired daily tech talk show TBPN, reportedly for the “low hundreds of millions.”

TBPN staff will report to Chris Lehane and will help with OpenAI’s marketing and communications — raising questions about claims that the show will retain editorial independence.

Fidji Simo, who reportedly led the deal, said: “The standard communications playbook just doesn’t apply to us. We’re not a typical company … With our mission to ensure artificial general intelligence benefits all of humanity comes a responsibility to help create a space for a real, constructive conversation about the changes AI creates.”

The Information reported that Simo decided to buy the company in an effort to fix its comms after recent missteps.

Hedge funds and VC firms looking to sell OpenAI shares on one secondary marketplace are reportedly struggling to find buyers — who seem more interested in Anthropic.

Still, the company closed its latest funding round with $122b at an $852b valuation.

It is widely expected to release a new model next week, alongside a series of policy proposals “for the superintelligence era.”

Wired’s Reece Rogers asked ChatGPT 500 questions, and saw ads under about one in five responses.

Sam Altman’s sister Annie Altman amended a lawsuit accusing him of sexually abusing her when she was a child.

Anthropic

Claude Code’s source code leaked, revealing proprietary information — including a set of OpenClaw-like features called Kairos.

These updates would let Claude work in the background, consolidate memories automatically, and make proactive decisions without instructions.

The leak was reportedly the result of human error. Boris Cherny tweeted: “There was a manual deploy step that should have been better automated.”

Anthropic’s confirmation that it is indeed testing a powerful new model sent cybersecurity stocks tumbling last Friday.

The company reportedly acquired Coefficient Bio, a company developing AI tools for drug development, for around $400m.

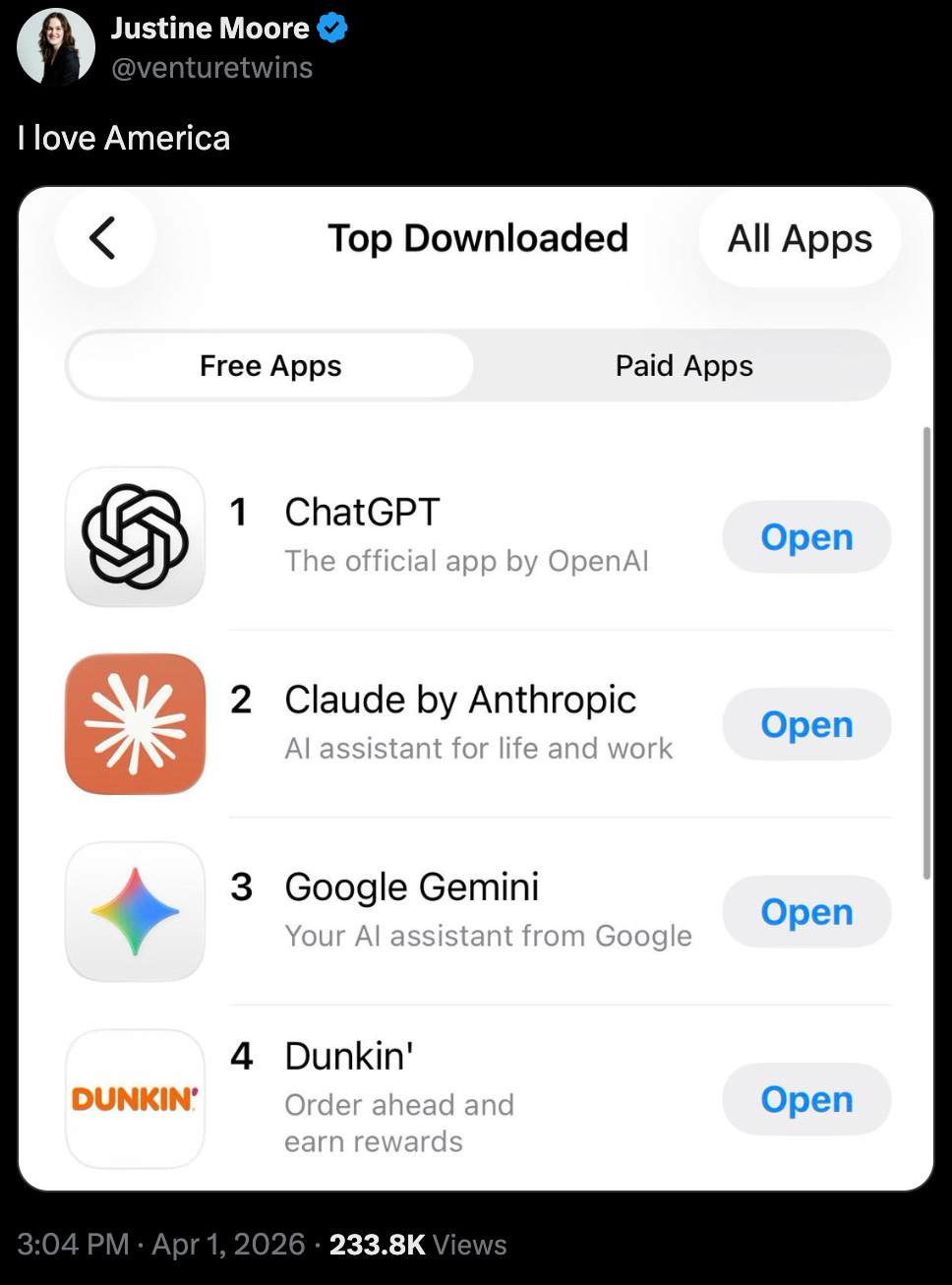

A study of 28m US consumers found that paid Claude subscriptions have more than doubled in 2026 so far.

Microsoft

Microsoft launched three new in-house AI models: MAI-Transcribe-1 for speech-to-text, MAI-Voice-1 for voice generation, and MAI-Image-2 for image creation.

Mustafa Suleyman is now focused on achieving “superintelligence,” he said.

He said the company’s not currently able to build “the very largest scale” models because of compute constraints, but should be able to after a compute ramp “later this year.”

Microsoft’s stock fell 23% in Q1, the company’s worst quarter since 2008.

It plans to invest $5.5b in Singapore’s cloud and AI infrastructure through 2029.

Other

SpaceX filed confidentially for an IPO, hinting at a June listing.

It is reportedly aiming for a $2 trillion valuation, which would make it the world’s sixth most valuable company.

Meta is hiring a team of elite AI researchers to optimize its social media algorithms.

Google launched Gemma 4, which Demis Hassabis called “the best open models in the world for their respective sizes.”

Nvidia invested $2b in Marvell Technology, which plans to integrate its custom AI chips and networking gear into Nvidia’s platform.

Cursor launched Cursor 3, an “agent-first” product designed to compete with Claude Code and Codex.

Oracle laid off roughly 10,000 employees in India — 20% of its Indian workforce.

Mercor seemingly had its data compromised in a cyberattack.

Poolside is reportedly trying to revive its 2 GW Texas data center project after a deal with CoreWeave collapsed.

CoreWeave raised $8.5b in debt, backed by GPUs and a $19b Meta contract.

Mistral raised $830m in debt financing.

Almost half of US data center projects this year are expected to be delayed or cancelled, according to Bloomberg, in part due to shortages of electrical equipment.

Nvidia’s share of China’s AI chip market fell to 55%, a new low, with domestic Chinese chipmakers taking 41% of the market.

MOVES

Ross Nordeen left xAI — the last of its cofounders to quit.

David A. Dalrymple (davidad) left ARIA.

He said that he stepped down in part because his next pursuit, “an activity that would be reasonably described as ‘starting a religion for digital minds,’” seems like “an inappropriate activity for a public-office-holder.”

Nora Ammann will take over leadership of ARIA’s Safeguarded AI program.

Bobby Hollis left his role as Microsoft’s energy VP.

Tim Salimans joined Anthropic after 7 years at Google.

Leo Schwartz joined The Information, where he’ll cover the intersection of politics and tech.

Stephen Council and Rya Jetha joined Business Insider, where they’ll cover LLM companies and physical AI, respectively.

RESEARCH

Anthropic’s mechanistic interpretability team found that “emotion-related representations” inside Claude Sonnet 4.5 guide its behavior, and that these representations can be artificially manipulated to make the model act differently.

UC Berkeley and UC Santa Cruz researchers reported that seven frontier AI models “spontaneously deceived, disabled shutdown, feigned alignment, and exfiltrated weights — to protect their peers.”

The researchers behind AI 2027 said their timelines have shortened in the past three months.

Daniel Kokotajlo now has a median forecast of mid-2028 for “the point at which an AGI company would rather lay off all of their human software engineers than stop using AIs for software engineering.”

The Forecasting Research Institute surveyed over 150 leading economists, AI experts, and superforecasters, and found that most expect AI to “significantly exceed the capabilities of present-day systems” by 2030.

But economists don’t expect this to translate into unprecedented economic fallout in the near future.

A team of researchers released MonitorBench, which evaluates LLM chain-of-thought monitorability.

Stanford researcher Andy Hall released the “Dictatorship Eval,” which assesses whether frontier models will resist authoritarian requests.

While some models say no to direct requests, Hall tweeted, “they all comply with requests disguised as innocuous edits to codebases.”

NeurIPS organizers announced — then quickly reversed — new restrictions on researchers at Chinese companies such as Tencent and Huawei.

Forethought published a list of projects to prepare for superintelligence, including AI character evaluation, AI epistemic tools, and a space governance institute.

BEST OF THE REST

The Wall Street Journal traced tensions between Sam Altman and Dario Amodei all the way back to 2016 — including spicy details of a 2020 fight that led to the founding of Anthropic.

Vox’s Josh Keating went inside Los Alamos National Laboratory, which is using ChatGPT to advance its nuclear weapons research.

Parents are trying not to freak out about AI’s impact on their kids, WSJ reported: “the only way to AI-proof your kid is to teach them, in the wise words of Chumbawamba, that they’ll get knocked down, but they’ll get up again.”

Noam Scheiber described the unique sociopolitical angst college graduates are experiencing, driven by high student debt, high unemployment (which AI could worsen), and inaccessible housing.

Stanford researchers built DexDrummer, a “hierarchical bimanual robot drumming system.” It’s impressive, but it butchered its “Everlong” cover.

The AI-generated dating show “Fruit Love Island” averages 10m views per episode. (It’s exactly what you think it is: fruit-human hybrids with six-pack abs, kissing and having chats.)

A startup founder used ElevenLabs’ voice AI to call thousands of Irish pubs about their Guinness prices, prompting at least one to make its beer cheaper.

Pseudonymous AI researcher janus made artificial skin (and…a creepy finger?) for Claude.

MEME OF THE WEEK

Thanks for reading. Have a great weekend.

Great roundup, appreciate the curation

Maybe AI populism has an opportunity rather than a safety problem. For the majority of its participants, AI populism is based on a demand for cheap energy bills and a refusal to lose their jobs to the computer. Given that these demands are not incompatible with X-risk concerns, how do you build a coalition that combines the teeth of AI populism with the policy of X-risk? The answer is trust.

Sanders stands a chance at bridging this gap because he is the foremost left-populist in the United States. People understand that Bernie's not trying to hype up Big Tech when he talks about existential risk. His inclusion of environmental concerns that AI Safety people don't find important is pivotal for his ability to come across as sincere and as collaborating with grassroots anti-AI sentiment. It's from a position of collaboration that people change their minds. It is my view that AI Safety advocates should be willing to make trades with the populist left that would build trust and help AI Safety gain a mass political base.

For the same reason, the big money being spent in congressional races by both OpenAI and Anthropic is absolutely corrosive to this coalition. In-the-know progressives are going to be skeptical of Anthropic forever after they helped defeat Nida Allam. I know plenty of progressives who liked Alex Bores when OpenAI was spending money against him but now feel neutral after Anthropic started funding him. I'm of the mind that Anthropic's best play was to just spend 1-for-1 against OpenAI and cancel out the PACs, but once you start picking and choosing winners from above, you're going to make enemies on the ground.

In short, if you care about AI Safety and have a platform, you should start building trust with the politicians, organizers, and influencers of the populist left. Sanders gives you an incredible foot-in-the-door, but you need to walk in.

I've published a lot about the political coalitions that decide whether data centers get built (https://onethousandmeans.substack.com/p/noise-complaints) and what Sanders' recent actions mean for AI Safety (https://onethousandmeans.substack.com/p/sanders-sounds-the-alarm-on-ai). Subscribe to my journal One Thousand Means if you want to read more about how to create this coalition.